Amazon Bedrock AgentCore Master Index - A Hub for AgentCore Articles and Decision Patterns

First Published:

Last Updated:

A hub for the six existing Amazon Bedrock AgentCore articles on hidekazu-konishi.com, organized around a self-named AgentCore Deployment Ladder (L0–L4) that maps each AgentCore service to a concrete adoption step.


1. Introduction — Why a Hub for AgentCore

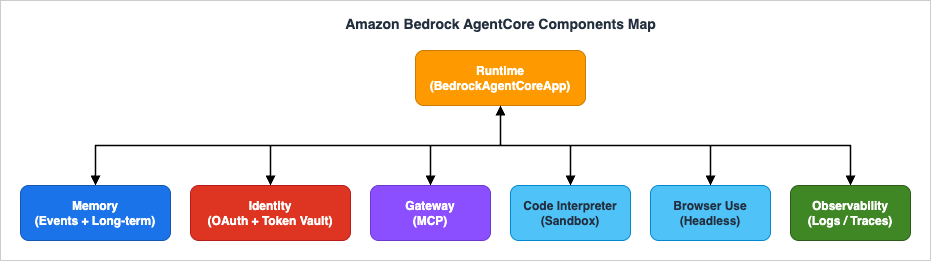

Amazon Bedrock AgentCore is not a single service. It is a family of seven managed services (Runtime, Memory, Identity, Gateway, Code Interpreter, Browser Use, and Observability) that you can adopt independently, in any order, around an existing AI agent stack. That composability is the point, and it is also why teams new to AgentCore often stall. The natural questions are: which service do I need first, which ones do I need at all, and in what order do I take on the operational burden of each?

This article is a hub, not a tutorial. The six existing AgentCore articles on hidekazu-konishi.com already cover the concepts, hands-on patterns, security model, infrastructure-as-code, multi-agent orchestration, and production operations in depth. What was missing was a single starting point that organizes those six articles against a clear sequence of decisions: "if you are at point X, read article Y next."

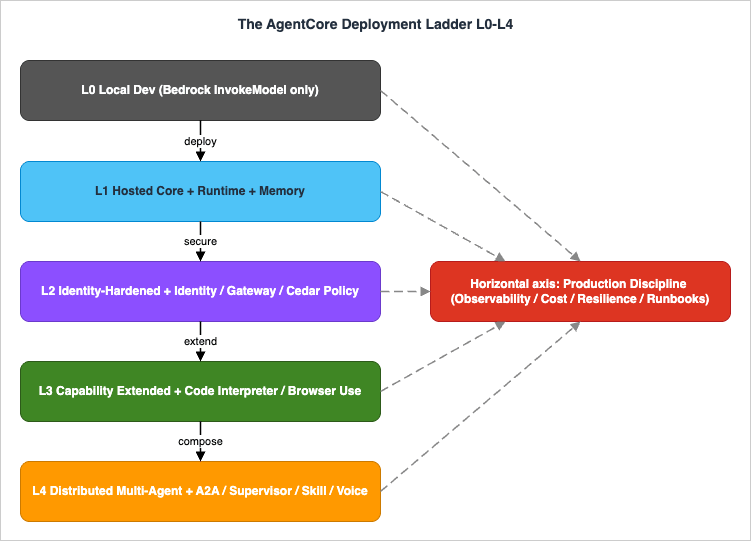

To make that sequence concrete, this hub introduces a self-named framework called The AgentCore Deployment Ladder. The Ladder names five levels of AgentCore adoption — L0 Local Dev, L1 Hosted Core, L2 Identity-Hardened Exposure, L3 Capability Extended, and L4 Distributed Multi-Agent — and pairs each level with the article that walks you through it. A horizontal axis, Production Discipline, runs across every level from L1 onward and is anchored by the Production Operations Guide. The Ladder is opinionated on purpose: it makes a clear recommendation about what to add next instead of presenting AgentCore as a flat menu.

If you read this hub end-to-end and then follow the recommended sequence through the linked articles, you will arrive at a production AgentCore deployment that you actually understand — every service in your architecture chosen for a reason, not because it shipped together with the others.

2. What is Amazon Bedrock AgentCore?

Amazon Bedrock AgentCore is the AWS managed runtime, state store, and security layer for production AI agents. Rather than replacing an agent framework like Strands Agents or LangGraph, AgentCore hosts the agent your framework defines, persists its conversation state, exposes its tools securely, executes its code in a sandbox, drives a browser when one is required, manages caller identity, and ships telemetry to CloudWatch, all without you running the servers underneath. It is designed to be framework-agnostic, model-agnostic, and composable: you adopt one service today, three tomorrow, and the others only if a real use case demands them.

The single-sentence definition: AgentCore is the part of an AI agent platform that you would otherwise have to build yourself, packaged as seven independently consumable AWS services. It is the operational substrate, not the brain. The brain (the orchestration loop, the prompt design, the tool selection logic) still lives in your framework code, and that code stays largely unchanged when AgentCore is added; Runtime asks only for a thin entrypoint wrapper around it.

2.1 What AgentCore Is Not

It is just as useful to enumerate what AgentCore is not, because each of these confusions has wasted real engineering time.

- AgentCore is not an LLM. It does not generate text. The agent it hosts calls Bedrock model APIs (or any other model your framework can reach), but the model is a separate dependency.

- AgentCore is not a single service. Every one of the seven components — Runtime, Memory, Identity, Gateway, Code Interpreter, Browser Use, Observability — can be adopted alone. You do not have to take all of it.

- AgentCore is not the successor to Amazon Bedrock Agents. Amazon Bedrock Agents (the older, opinionated agent-as-a-service offering) and AgentCore can coexist. AgentCore is the more flexible substrate for teams that have outgrown Bedrock Agents' fixed orchestration model or that need to bring a third-party framework.

- AgentCore is not a low-code product. You will be writing Python (or another supported language), defining tools, designing prompts, and reasoning about IAM. The platform removes infrastructure friction; it does not remove agent design.

2.2 The Three Pillars of AgentCore Value

When a colleague asks "why would I add AgentCore to a working prototype?", three concrete answers help.

First, stateful sessions: Memory removes the work of running your own conversation store with both short-term event history and durable long-term knowledge. Second, secure tool exposure: the combination of Identity (OAuth and a token vault), Gateway (MCP-based tool exposure), and Cedar policy means your agent can safely act on a real user's behalf against third-party APIs without your team writing an identity broker. Third, production-grade hosting: Runtime gives you per-session microVM isolation, NDJSON-to-SSE streaming, and an HTTP/SSE invocation surface without you operating Kubernetes or ECS yourself. Each of those three pillars is a component your team would otherwise have to build and operate, often a multi-month effort on its own.

3. AgentCore Architecture Overview

The seven AgentCore services arrange themselves around a central hub: the Runtime. Every agent invocation lands at Runtime, which then talks to the other services as needed for the request. This shape is important to internalize because it changes how you reason about latency and failure: every other AgentCore service is reached from Runtime, and Runtime is the only AgentCore service the calling application sees.

3.1 The Seven Services at a Glance

The table below is the single most useful artifact in this hub. It answers "what does each service own, and when do I need it?" and is kept short on purpose so it stays scannable.

| Service | Owns | Doesn't Own | When You Need It |
|---|---|---|---|
| Runtime | Per-session microVM hosting, scaling, SSE streaming, HTTP entrypoint | Agent business logic, prompts, model choice | Always (L1+) |
| Memory | Short-term event log, long-term memory namespaces | Vector knowledge bases (use Amazon Bedrock Knowledge Bases) | L1+: any conversational agent |
| Identity | Inbound OAuth 2.0 verification, outbound token vault for third-party APIs | Authorization decisions (Cedar does that) | L2+: multi-user or third-party API calls |
| Gateway | MCP server endpoint that exposes tools to the agent | Tool implementations themselves | L2+: when exposing tools to multiple agents |
| Code Interpreter | Per-session sandboxed Python execution | Persisting code across sessions | L3+: when the agent needs to compute or analyze data |
| Browser Use | Per-session headless browser, with screenshot and DOM tools | API-based integrations | L3+: when the target system has no API |
| Observability | Vended Logs, traces, GenAI Observability Dashboard | Business KPI dashboards | Production Discipline: every level from L1 onward |

3.2 How the Services Compose

The composition rule is simple. Runtime is the host: it is what the calling application invokes. Identity sits in front of Runtime to verify the caller (inbound) and beside Runtime to obtain third-party tokens on the user's behalf (outbound). Gateway sits beside Runtime to expose tools through the Model Context Protocol (MCP), so the same tool can be used by one agent or many. Memory is the state store, and Code Interpreter and Browser Use are capability services; Runtime reaches into all three per session. Observability sits underneath, capturing application logs and OpenTelemetry traces from every other service.

The practical consequence is that the seven services have a natural adoption order even though they are technically independent. You almost never need Browser Use before you need Runtime. You almost never need Gateway before you need Identity. The next section names that order explicitly.
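Under the hood, MCP messages are JSON-RPC 2.0. As a minimal sketch (not Gateway's own code), the snippet below builds the two requests an MCP client sends most often, `tools/list` and `tools/call`, using the method and parameter names from the MCP specification. The tool name `lookup_order` and its arguments are hypothetical, and the initialization handshake and transport are omitted because your framework's MCP client and Gateway handle them.

```python
import itertools
import json
from typing import Optional

_request_ids = itertools.count(1)  # request ids must be unique within an MCP session


def mcp_request(method: str, params: Optional[dict] = None) -> str:
    """Serialize one JSON-RPC 2.0 request in the shape MCP uses."""
    message = {"jsonrpc": "2.0", "id": next(_request_ids), "method": method}
    if params is not None:
        message["params"] = params
    return json.dumps(message)


# Discover which tools the server (here, Gateway) exposes.
list_tools = mcp_request("tools/list")

# Invoke one tool by name; "lookup_order" is a hypothetical tool.
call_tool = mcp_request(
    "tools/call",
    {"name": "lookup_order", "arguments": {"order_id": "1234"}},
)
print(list_tools)
print(call_tool)
```

Because a tool is addressed only by its name and JSON arguments, the same tool behind Gateway can serve any number of agents without being imported into any of them.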

4. Implementation Decision Tree — The AgentCore Deployment Ladder

Rather than presenting AgentCore as a flat menu of seven services, this hub introduces a five-level adoption framework called The AgentCore Deployment Ladder. Each level adds a deliberate capability, has a clear graduation criterion, and points to the deep-dive article that walks you through it. A separate horizontal axis — Production Discipline — runs alongside all levels from L1 onward and is anchored by the Production Operations Guide.

4.1 The Five Levels

| Level | Name | Added Services | Solves | Primary Reference |
|---|---|---|---|---|
| L0 | Local Dev | (none; Bedrock InvokeModel only) | Idea validation, prompt iteration, model selection | Beginner's Guide |
| L1 | Hosted Core | + Runtime + Memory | "How do I keep this agent running without managing servers?" | Implementation Guide Part 1 |
| L2 | Identity-Hardened Exposure | + Identity + Gateway + Cedar Policy | "How do I safely let many users call it?" | Implementation Guide Part 2 |
| L3 | Capability Extended | + Code Interpreter + Browser Use | "How do I let it compute and act on systems without APIs?" | Implementation Guide Part 1 (Code Interpreter) / Part 4 (Browser Use) |
| L4 | Distributed Multi-Agent | + A2A / Supervisor / Skill / Voice Mode | "How do I scale beyond what one agent can hold in context?" | Implementation Guide Part 4 |
| Horizontal | Production Discipline | Observability / Cost control / Resilience / Runbooks | "How do I keep it up for months, not weeks?" | Production Operations Guide |
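Because each level keeps everything below it, the Ladder is cumulative. The sketch below is purely illustrative: `LADDER` and `services_at` are hypothetical names, not part of any AgentCore SDK. It encodes the table so you can ask which services a given level implies, with Observability joining from L1 as the Production Discipline axis.

```python
# Hypothetical encoding of the AgentCore Deployment Ladder; names are illustrative only.
LADDER = {
    "L0": [],  # Local Dev: direct Bedrock model calls, no AgentCore services yet
    "L1": ["Runtime", "Memory"],
    "L2": ["Identity", "Gateway", "Cedar Policy"],
    "L3": ["Code Interpreter", "Browser Use"],
    "L4": ["Multi-agent orchestration (A2A / Supervisor / Skill / Voice)"],
}

# The horizontal Production Discipline axis starts at this level.
PRODUCTION_DISCIPLINE_FROM = "L1"


def services_at(level: str) -> list[str]:
    """Return every service in use at `level`, including those added at lower levels."""
    if level not in LADDER:
        raise ValueError(f"unknown level: {level}")
    levels = list(LADDER)  # dicts keep insertion order, so this is L0..L4
    position = levels.index(level)
    services: list[str] = []
    for lvl in levels[: position + 1]:
        services.extend(LADDER[lvl])
    if position >= levels.index(PRODUCTION_DISCIPLINE_FROM):
        services.append("Observability")
    return services


print(services_at("L2"))
```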

4.2 L0 — Local Dev

Where you are. Running a Strands Agent (or LangGraph, or LangChain) locally with python my_agent.py. The agent calls Bedrock's model APIs (InvokeModel or Converse) directly. There is no AgentCore Runtime container, no persistent memory across runs, and no inbound HTTP endpoint: just an idea you can iterate on.

What this level is for. L0 exists so you can prove the agent is worth productionizing before committing to the operational surface. Iterate on prompts here. Switch models here. Decide which tools the agent actually needs.
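At L0 the whole "platform" is a single model call. A minimal sketch, assuming a boto3 `bedrock-runtime` client and using the unified Converse API (listed in section 6.1) rather than InvokeModel, because Converse keeps the request shape the same across models and so makes switching models at this level cheap. The client is passed in so the helper can be exercised without AWS credentials; the model ID in the usage comment is a placeholder.

```python
def ask(client, model_id: str, prompt: str, max_tokens: int = 512) -> str:
    """Send one user turn through the Bedrock Converse API and return the reply text."""
    response = client.converse(
        modelId=model_id,
        messages=[{"role": "user", "content": [{"text": prompt}]}],
        inferenceConfig={"maxTokens": max_tokens},
    )
    # The assistant reply is a list of content blocks under output.message.content.
    blocks = response["output"]["message"]["content"]
    return "".join(block.get("text", "") for block in blocks)


# Usage with a real client (requires AWS credentials and model access in your Region):
#   import boto3
#   bedrock = boto3.client("bedrock-runtime")
#   print(ask(bedrock, "<model-id>", "Summarize what AgentCore Runtime does in one sentence."))
```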

Graduation criterion. You can run a representative end-to-end task with acceptable quality, and the next constraint is no longer the agent design — it is "I need this to be reachable as an HTTP endpoint, and I need it to remember the previous turn."

Read next. The Beginner's Guide to learn the AgentCore service vocabulary, then Part 1 of the Implementation Guide for the actual deploy.

4.3 L1 — Hosted Core (+ Runtime + Memory)

What you add. Runtime and Memory. You wrap the agent code with BedrockAgentCoreApp and @app.entrypoint, deploy it to AgentCore Runtime, and configure a Memory resource for both short-term event history and long-term knowledge.

Why these two together. Runtime without Memory means every conversation starts over. Memory without Runtime means you are hosting the agent yourself anyway. The pair is the smallest unit that makes sense to adopt together.
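To make the two tiers concrete, here is a toy in-process model of the idea, not the AgentCore Memory API: short-term events expire after a window, while facts stored in a long-term namespace survive. `ToyTwoTierMemory`, its methods, and the one-hour window are all hypothetical; in AgentCore the extraction of long-term knowledge is done by the managed service rather than by calls like `remember`.

```python
import time
from collections import defaultdict


class ToyTwoTierMemory:
    """Illustrative two-tier store: expiring short-term events plus durable long-term facts."""

    def __init__(self, short_term_ttl_s: float = 3600.0, clock=time.time):
        self.ttl = short_term_ttl_s
        self.clock = clock                   # injectable so expiry can be tested
        self.events = defaultdict(list)      # session_id -> [(timestamp, role, text)]
        self.long_term = defaultdict(list)   # namespace (e.g. one per user) -> [fact]

    def add_event(self, session_id: str, role: str, text: str) -> None:
        self.events[session_id].append((self.clock(), role, text))

    def recent_events(self, session_id: str) -> list[tuple[str, str]]:
        """Short-term tier: only events newer than the TTL window are returned."""
        cutoff = self.clock() - self.ttl
        return [(role, text) for ts, role, text in self.events[session_id] if ts >= cutoff]

    def remember(self, namespace: str, fact: str) -> None:
        self.long_term[namespace].append(fact)

    def recall(self, namespace: str) -> list[str]:
        """Long-term tier: facts persist regardless of session age."""
        return list(self.long_term[namespace])
```

The graduation criterion below (reconnect a day later and still be recalled) is exactly the case where the short-term tier has expired and only the long-term tier can answer.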

Graduation criterion. A user can connect, have a conversation of at least five turns, disconnect, reconnect a day later, and have the agent recall the earlier context. Streaming responses arrive token-by-token over SSE. The Runtime container redeploys cleanly via direct_code_deploy or CDK.

Read next. Part 2 of the Implementation Guide if you are about to open the agent to anyone outside your immediate team.

4.4 L2 — Identity-Hardened Exposure (+ Identity + Gateway + Cedar)

What you add. Identity for inbound JWT verification and the outbound token vault, Gateway to expose tools through MCP, and Cedar policy for authorization decisions.

Why these three together. The threat model changes the moment a second person can call the agent. Identity proves who is calling. Gateway prevents the agent from being tightly coupled to a single tool implementation. Cedar policy makes the authorization decision auditable and deny-by-default. Adding them piecemeal usually leaves a gap an attacker can drive through.

Graduation criterion. A non-team-member can authenticate via Cognito, invoke the agent, and have the agent call a third-party API on their behalf, with the access token retrieved from the Identity token vault, scoped to that user, never written to logs, and authorized by an explicit permit rule in your Cedar policy. The Cedar policy ran in LOG_ONLY mode for at least a week with no false positives before being switched to ENFORCE.

Read next. Part 3 of the Implementation Guide when you stop wanting to deploy by hand.
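The "never written to logs" requirement is easiest to guarantee with a safety net at the logging layer in addition to careful code. A minimal sketch using Python's standard `logging` module: a filter that masks anything shaped like a bearer token or a JWT before a record is emitted. The regular expressions are assumptions about token shape, not an exhaustive detector, and the filter complements the token vault rather than replacing it.

```python
import logging
import re

# Assumed token shapes: "Bearer <token>" values and three-part base64url JWTs.
_TOKEN_PATTERNS = [
    re.compile(r"(?i)bearer\s+[A-Za-z0-9\-._~+/]+=*"),
    re.compile(r"eyJ[A-Za-z0-9_-]+\.[A-Za-z0-9_-]+\.[A-Za-z0-9_-]+"),
]


def redact(text: str) -> str:
    for pattern in _TOKEN_PATTERNS:
        text = pattern.sub("[REDACTED]", text)
    return text


class RedactTokensFilter(logging.Filter):
    """Rewrite each record's final message so token-like strings never reach a handler."""

    def filter(self, record: logging.LogRecord) -> bool:
        record.msg = redact(record.getMessage())  # format first, then redact
        record.args = ()                          # message is already fully formatted
        return True                               # keep the record, just redacted


# A filter on a logger only sees records logged on that logger itself;
# attach it to your handlers instead if child loggers must be covered too.
logger = logging.getLogger("agent")
logger.addFilter(RedactTokensFilter())
```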

4.5 L3 — Capability Extended (+ Code Interpreter + Browser Use)

What you add. Code Interpreter, which gives the agent a sandboxed Python environment for arbitrary computation, and Browser Use, which gives the agent a headless browser for any system that lacks an API.

Why these two together. Both are capability extensions that let the agent do something it could not do before. Both follow the same per-session lifecycle pattern. Both are easy to misuse: Code Interpreter when you try to persist state across sessions (don't), and Browser Use when you try to drive a system that should be integrated through an API contract (use the API if it exists).
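The per-session lifecycle both tools share (start, use, stop) maps naturally onto a Python context manager, which guarantees the stop call even when the agent's code raises. This is a generic sketch: `start_session`, `execute`, and `stop_session` are hypothetical method names standing in for whichever SDK you use, and `FakeSandboxClient` exists only to make the pattern runnable.

```python
from contextlib import contextmanager


@contextmanager
def sandbox_session(client, **start_kwargs):
    """Start a per-session sandbox, yield its id, and always stop it afterwards."""
    session_id = client.start_session(**start_kwargs)  # hypothetical method name
    try:
        yield session_id
    finally:
        # Runs on success and on error, so no sandbox is left running (and billing).
        client.stop_session(session_id)  # hypothetical method name


class FakeSandboxClient:
    """Stand-in client used only to demonstrate the lifecycle."""

    def __init__(self):
        self.active = set()
        self._counter = 0

    def start_session(self, **_):
        self._counter += 1
        sid = f"session-{self._counter}"
        self.active.add(sid)
        return sid

    def execute(self, session_id: str, code: str) -> str:
        if session_id not in self.active:
            raise RuntimeError("session is not active")
        return f"ran {len(code)} chars"

    def stop_session(self, session_id: str) -> None:
        self.active.discard(session_id)


client = FakeSandboxClient()
with sandbox_session(client) as sid:
    print(client.execute(sid, "print(1 + 1)"))
# Once the block exits the sandbox is gone, which is why state must not be kept there.
```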

Graduation criterion. The agent can take a user's CSV, analyze it inside Code Interpreter, return a chart, and (when needed) log into a legacy web app via Browser Use to file a ticket — all within the security boundary established at L2.

Read next. Part 4 of the Implementation Guide when one agent cannot hold the entire task in context.

4.6 L4 — Distributed Multi-Agent (+ A2A / Supervisor / Skill / Voice)

What you add. Multiple Runtimes composed together. The five orchestration patterns from Part 4 (A2A Protocol, Supervisor + Sub-agent, Direct boto3 Invocation, Skill System, and Voice Mode via Nova Sonic 2) all live here.

Why these patterns together. They are different ways to compose the same primitive: more than one AgentCore Runtime cooperating to satisfy one user request. Choosing among them is the work at this level, not adopting all of them.

Graduation criterion. A user request triggers a Supervisor agent that routes to specialist sub-agents based on intent; the specialists return; the Supervisor composes the answer; Guardrails Shadow Mode has been running in production long enough to give you confidence in the multi-agent quality before you would enforce it.
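The Supervisor's job in that criterion is routing. In practice the routing decision is usually made by an LLM; the sketch below substitutes a deterministic keyword classifier so the control flow (classify, dispatch to specialists, compose) is visible and testable. The specialist names and keywords are hypothetical, and each specialist function stands in for a call to a separate AgentCore Runtime.

```python
import re
from typing import Callable


# Hypothetical specialists; in a real deployment each would invoke its own Runtime.
def billing_agent(request: str) -> str:
    return "billing: checked the latest invoice"


def support_agent(request: str) -> str:
    return "support: opened a troubleshooting ticket"


# Specialist name -> (trigger keywords, handler). An LLM router would replace the keywords.
SPECIALISTS: dict[str, tuple[set[str], Callable[[str], str]]] = {
    "billing": ({"invoice", "charge", "refund"}, billing_agent),
    "support": ({"error", "crash", "login"}, support_agent),
}


def route(request: str) -> list[str]:
    """Return the specialists whose keywords appear in the request, in table order."""
    words = set(re.findall(r"[a-z]+", request.lower()))
    return [name for name, (keywords, _) in SPECIALISTS.items() if words & keywords]


def supervise(request: str) -> str:
    """Dispatch to every matching specialist, then compose one answer from their results."""
    selected = route(request)
    if not selected:
        return "supervisor: no specialist matched; answering directly"
    return " | ".join(SPECIALISTS[name][1](request) for name in selected)


print(supervise("I see a login error and an unexpected charge"))
```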

Read next. The Production Operations Guide if you have not been reading it in parallel already.

4.7 The Horizontal Axis — Production Discipline

Production Discipline is not a Ladder level. It is what you do at every level from L1 onward, in parallel. The Production Operations Guide is structured to be useful at L1 (where you mostly need basic logs and an alarm or two) and at L4 (where you need a full four-signal observability stack, vCPU-hour cost analysis, retry budgets, circuit breakers, and pre-written runbooks).

Two pieces of advice from that guide are worth repeating in this hub because they are the most commonly skipped. First, set up the four-signal observability stack at L1, before you actually need it: empty dashboards on Day 1 of an incident are the most expensive form of "we'll add it later." Second, practice the runbooks before the incident. The team that has rehearsed a Cedar-policy rollback resolves it in minutes; the team reading the runbook for the first time during the outage takes hours.
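Retry budgets and circuit breakers are small enough to sketch. Below is a minimal, single-threaded illustration, assuming a callable that raises on throttling or timeout; the retry budget here is the simplest per-call form (a cap on attempts), the thresholds are placeholders, and in production you would also account for the retries the AWS SDK already performs rather than stacking more on top blindly.

```python
import random
import time


class CircuitOpenError(RuntimeError):
    """Raised instead of calling the dependency while the circuit is open."""


class CircuitBreaker:
    """Open after `threshold` consecutive failures; allow one trial call after `cooldown_s`."""

    def __init__(self, threshold: int = 5, cooldown_s: float = 30.0, clock=time.monotonic):
        self.threshold = threshold
        self.cooldown_s = cooldown_s
        self.clock = clock
        self.failures = 0
        self.opened_at = None  # None means the circuit is closed

    def call(self, fn, *args, **kwargs):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.cooldown_s:
                raise CircuitOpenError("dependency unavailable; degrade gracefully")
            self.opened_at = None  # half-open: the next call is a trial
        try:
            result = fn(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.failures >= self.threshold:
                self.opened_at = self.clock()  # (re)open; a failed trial reopens at once
            raise
        self.failures = 0  # any success closes the circuit fully
        return result


def call_with_retry_budget(fn, max_attempts: int = 3, base_delay_s: float = 0.2,
                           sleep=time.sleep):
    """Retry with exponential backoff and full jitter, capped at `max_attempts` calls."""
    for attempt in range(max_attempts):
        try:
            return fn()
        except CircuitOpenError:
            raise  # an open circuit is a decision, not a transient error; don't retry it
        except Exception:
            if attempt == max_attempts - 1:
                raise  # budget exhausted: surface the last error
            sleep(random.uniform(0, base_delay_s * 2 ** attempt))


# Example wiring (invoke_model_somehow is a hypothetical function):
#   breaker = CircuitBreaker(threshold=3, cooldown_s=10.0)
#   result = call_with_retry_budget(lambda: breaker.call(invoke_model_somehow))
```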

5. Deep Dive Articles — Read in This Order

The six existing AgentCore articles are presented in two views below. The first view is linear: read them in numbered order from 1 to 6. The second view is need-based: jump to the Ladder level you are working on right now.

5.1 Linear Reading Order

5.1.1 Start Here — Beginner's Guide

Article: Amazon Bedrock AgentCore Beginner's Guide — AI Agent Development from Basics with Detailed Term Explanations

Ladder Position: L0 prerequisite; read before any code.

Time Investment: ~60 minutes.

This is your starting point if AgentCore is new to you. It walks through what an AI agent is, what each of AgentCore's seven core services does, and how they fit together — with extensive term-by-term definitions so you can build a complete mental model before writing any code. Every concept that the Implementation Guide series later assumes is established here, including the seven-service map, the distinction between Bedrock Agents and AgentCore, and the framework-agnostic / model-agnostic design philosophy that motivates AgentCore's composability.

Read this even if you are already familiar with AI agents from a different platform. The naming and boundaries AgentCore uses are specific (for example, what counts as "Memory" versus "Knowledge Base", or what "Identity" specifically does inside AgentCore), and getting that vocabulary right early saves friction in the deeper articles.

5.1.2 Foundation Patterns — Implementation Guide Part 1

Article: Amazon Bedrock AgentCore Implementation Guide Part 1: Runtime, Memory, and Code Interpreter Patterns

Ladder Position: Primary reference for L1 (Hosted Core). Also covers Code Interpreter, which lives at L3.

Time Investment: ~90 minutes plus hands-on time.

The hands-on starting point. Take a Strands Agent from local script to a hosted AgentCore Runtime, persist conversation state in Memory's two-tier store (short-term Events plus long-term Memories), and stream tokens back to the client via NDJSON-to-SSE. The BedrockAgentCoreApp plus @app.entrypoint pattern introduced here is the deployment-agnostic surface that every later Part builds on: the same code can be deployed via direct_code_deploy during prototyping and via CDK in production without changing the entrypoint.

This article also introduces the Code Interpreter session lifecycle (start, execute, stop). It treats Code Interpreter as a foundation rather than an L3 capability because the lifecycle pattern is the same as Runtime and Memory and is easier to learn in this context. When you read Part 4 and the Production Operations Guide later, the patterns from this article are assumed knowledge.
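The NDJSON-to-SSE step is a small framing transformation: each newline-delimited JSON object the agent produces becomes one Server-Sent Events `data:` line followed by a blank line. A minimal standard-library sketch of that conversion, only to show what the transformation is; the `{"text": ...}` chunk shape is an assumption for illustration, and in the setup described above Runtime does this framing for you.

```python
import json
from typing import Iterable, Iterator


def ndjson_to_sse(lines: Iterable[str]) -> Iterator[str]:
    """Turn each NDJSON line into one SSE event: a 'data:' line followed by a blank line."""
    for line in lines:
        line = line.strip()
        if not line:
            continue  # tolerate blank keep-alive lines in the NDJSON stream
        obj = json.loads(line)  # each line must be one complete JSON value
        # Compact re-serialization; JSON escapes newlines inside strings, so the
        # payload is guaranteed to fit on a single SSE data line.
        yield f"data: {json.dumps(obj, separators=(',', ':'))}\n\n"


chunks = ['{"text": "Hel"}\n', "\n", '{"text": "lo"}\n']
for event in ndjson_to_sse(chunks):
    print(event, end="")
```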

5.1.3 Security Layer — Implementation Guide Part 2

Article: Amazon Bedrock AgentCore Implementation Guide Part 2: Multi-Layer Security with Identity, Gateway, and Policy

Ladder Position: Primary reference for L2 (Identity-Hardened Exposure).

Time Investment: ~75 minutes.

Defense-in-depth for AI agents, layered as authentication (Identity, Cognito JWT, @requires_access_token), tool exposure (Gateway, MCP), and authorization (Cedar policy with forbid-overrides-permit semantics). The article walks through the three threats that are specific to agentic systems (token leakage through model output, privilege escalation across tools, and impersonation of one user by another's session) and shows how the three layers each close a distinct attack surface.

The most important operational pattern in this article is the LOG_ONLY → ENFORCE transition for Cedar policy. Cedar's deny-by-default makes "wrote a rule slightly wrong and locked everyone out" a real risk, and running the policy in LOG_ONLY mode in production for at least a week before flipping to ENFORCE is the established path to avoiding that.
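The properties that make Cedar both safe and risky (deny-by-default and forbid-overrides-permit), plus the LOG_ONLY/ENFORCE switch, can be modeled in a few lines. This is a toy evaluator written for illustration, not the Cedar engine or the AgentCore Policy API: real policies are written in the Cedar language and evaluated by the service, whereas the rules here are plain Python predicates and `evaluate` is a hypothetical helper.

```python
from dataclasses import dataclass
from typing import Callable

Request = dict  # e.g. {"principal": "alice", "action": "call_tool", "resource": "crm"}


@dataclass
class Rule:
    effect: str                         # "permit" or "forbid"
    matches: Callable[[Request], bool]


def is_authorized(rules: list[Rule], request: Request) -> bool:
    """Deny by default; a matching forbid overrides any number of matching permits."""
    effects = {rule.effect for rule in rules if rule.matches(request)}
    if "forbid" in effects:
        return False
    return "permit" in effects  # no matching permit means an implicit deny


def evaluate(rules: list[Rule], request: Request, mode: str, would_deny: list) -> bool:
    """LOG_ONLY records would-be denials but allows the call; ENFORCE applies the decision."""
    allowed = is_authorized(rules, request)
    if not allowed:
        would_deny.append(request)  # review these for false positives before enforcing
    if mode == "ENFORCE":
        return allowed
    if mode == "LOG_ONLY":
        return True
    raise ValueError(f"unknown mode: {mode}")
```

A week of LOG_ONLY is, in these terms, a week of reviewing `would_deny` until every entry is a request you really do want to block.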

5.1.4 Infrastructure — Implementation Guide Part 3

Article: Amazon Bedrock AgentCore Implementation Guide Part 3: Building a 4-Stack CDK Architecture with an Observability Pipeline

Ladder Position: The promotion path for any Ladder level from L1 upward. Sets up the infrastructure that everything else runs on.

Time Investment: ~120 minutes plus deployment time.

One cdk deploy --all to production. A four-stack CDK pattern (FoundationStack, BedrockStack, AgentStack, ChatAppStack) separates infrastructure by change frequency: Cognito and the database change rarely, agent code changes daily, and putting them in the same stack is the most common cause of "I redeployed and now Cognito users were rotated." The article also wires up the observability pipeline (CloudWatch Vended Logs, X-Ray Transaction Search, and a Firehose delivery stream for cost and usage analysis) at the same time, so the agent goes live with telemetry already flowing.

The end-state from this article is a deployment you can recreate in a new AWS account in roughly eleven minutes. That recreatability is what makes Production Discipline tractable: incident response is much faster when "I can rebuild this from a CDK app" is the rollback story.
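Whichever tool creates the log groups, give them an explicit retention period, because the CloudWatch Logs default is Never Expire. A minimal sketch assuming boto3's CloudWatch Logs client (`put_retention_policy` with `logGroupName` and `retentionInDays`); which log groups to pass in is left to you, and 30 days is a placeholder. In a CDK app you would more likely set retention where the log group is defined; the boto3 form is shown because it is self-contained. CloudWatch Logs accepts only specific retention values, so the helper validates before calling the API.

```python
# Retention values accepted by the PutRetentionPolicy API at the time of writing;
# re-check the API reference before relying on this list.
ALLOWED_RETENTION_DAYS = {1, 3, 5, 7, 14, 30, 60, 90, 120, 150, 180, 365, 400, 545,
                          731, 1096, 1827, 2192, 2557, 2922, 3288, 3653}


def set_retention(logs_client, log_group_names, days: int = 30) -> list[str]:
    """Apply an explicit retention policy to each log group; returns the groups updated."""
    if days not in ALLOWED_RETENTION_DAYS:
        raise ValueError(f"{days} is not an accepted retention value")
    updated = []
    for name in log_group_names:
        logs_client.put_retention_policy(logGroupName=name, retentionInDays=days)
        updated.append(name)
    return updated


# Usage with a real client (requires AWS credentials):
#   import boto3
#   set_retention(boto3.client("logs"), ["<your-agent-log-group>"], days=30)
```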

5.1.5 Multi-Agent Orchestration — Implementation Guide Part 4

Article: Amazon Bedrock AgentCore Implementation Guide Part 4: Multi-Agent Orchestration

Ladder Position: Primary reference for L4 (Distributed Multi-Agent). Also covers Browser Use, which lives at L3.

Time Investment: ~90 minutes.

Five orchestration patterns presented side-by-side: A2A Protocol for standardized agent-to-agent communication, Supervisor plus Sub-agent for LLM-routed delegation, Direct boto3 invocation for low-level control, Skill System for progressive feature disclosure, and Voice Mode for conversational interfaces via Nova Sonic 2. The article's structure makes choosing between them mechanical — there is a comparison table that maps the choice to the use case (when to use A2A versus Supervisor, when Skill System is overkill, when Voice is appropriate).

Browser Use and LangGraph multi-agent workflows also live in this article. Browser Use is included here, not in Part 1, because the pattern of using it correctly involves multiple cooperating sessions and is easier to learn after Runtime and Memory are familiar. Guardrails Shadow Mode — the discipline of running content filters in observe-only mode in production before flipping them to enforcement — is also introduced here and feeds directly into Production Operations.
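Shadow Mode only pays off if you decide, from data, when to flip to enforcement. A minimal sketch of that decision, assuming you have logged each observe-only verdict alongside a later human review label; the `ShadowRecord` shape, the 1% false-positive ceiling, and the 1,000-sample minimum are hypothetical and should come from your own risk tolerance.

```python
from dataclasses import dataclass


@dataclass
class ShadowRecord:
    would_block: bool    # the filter's verdict while running in observe-only mode
    truly_harmful: bool  # label assigned later by a human reviewer


def ready_to_enforce(records: list[ShadowRecord], max_fp_rate: float = 0.01,
                     min_samples: int = 1000) -> bool:
    """Recommend enforcement only with enough traffic and a low false-positive rate."""
    if len(records) < min_samples:
        return False  # not enough production traffic observed yet
    benign = [r for r in records if not r.truly_harmful]
    if not benign:
        return False  # cannot estimate a false-positive rate without benign traffic
    false_positives = sum(r.would_block for r in benign)
    return false_positives / len(benign) <= max_fp_rate
```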

5.1.6 Production Operations — Production Operations Guide

Article: Amazon Bedrock AgentCore Production Operations Guide — Observability, Cost Optimization, and Disaster Recovery

Ladder Position: Horizontal axis; applies in parallel to every Ladder level from L1 onward.

Time Investment: ~120 minutes; revisit in pieces during real incidents.

The operations manual for AgentCore agents in production. The article is organized around four signals — operational metrics, application logs, distributed traces, and quality evaluations — and argues that skipping the fourth (treating an agent like a stateless API and only watching the first three) is the most common mistake when porting traditional APM mental models onto agentic systems. Cost is decomposed into four buckets (model tokens, vCPU-hours, Memory storage, and tool calls) so you can attribute each line of an invoice to a specific deployment decision. Resilience is covered as a taxonomy of failure modes (throttling, timeout, content-filter rejection, model deprecation, regional outage) with named patterns (retry budget, circuit breaker, cross-region inference via CRIS, graceful degradation) for each.
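The four-bucket cost decomposition is simple arithmetic once you have usage numbers. A minimal sketch: every unit price is a parameter you fill in from the current AWS pricing page (this hub intentionally quotes none), the Memory unit is left generic because it depends on how Memory is priced, and the usage and price figures in the example are made-up round numbers chosen only to show the calculation.

```python
from dataclasses import dataclass


@dataclass
class Usage:
    input_tokens: int
    output_tokens: int
    runtime_vcpu_hours: float
    memory_units: float  # in whatever unit Memory is priced (check the pricing page)
    tool_calls: int


@dataclass
class UnitPrices:
    # Fill these in from the AWS pricing page; no real prices are assumed here.
    per_1k_input_tokens: float
    per_1k_output_tokens: float
    per_vcpu_hour: float
    per_memory_unit: float
    per_tool_call: float


def cost_by_bucket(u: Usage, p: UnitPrices) -> dict[str, float]:
    """Attribute spend to the four buckets so each invoice line maps to a decision."""
    return {
        "model_tokens": (u.input_tokens / 1000) * p.per_1k_input_tokens
        + (u.output_tokens / 1000) * p.per_1k_output_tokens,
        "runtime_vcpu": u.runtime_vcpu_hours * p.per_vcpu_hour,
        "memory": u.memory_units * p.per_memory_unit,
        "tool_calls": u.tool_calls * p.per_tool_call,
    }


# Made-up numbers, purely to show the arithmetic.
usage = Usage(2_000_000, 500_000, 10.0, 4.0, 1_000)
prices = UnitPrices(0.5, 2.0, 0.1, 0.25, 0.001)
breakdown = cost_by_bucket(usage, prices)
print(max(breakdown, key=breakdown.get))  # the bucket to optimize first
```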

Two sections are worth revisiting before any incident. The deployment-strategy section (Blue/Green, Canary, Shadow) is the playbook for rolling out a model version change without breaking conversations in flight. The incident-response section is a runbook design pattern — write the runbook before the on-call rotation needs it, not during the alert.
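The property that keeps conversations intact during a Canary rollout is stickiness: a session that started on one version stays there. A minimal sketch, assuming you split traffic at the application or API layer by session ID; hashing the ID (rather than choosing randomly per request) pins each conversation to one version for its whole life. `pick_version` and the version labels are hypothetical.

```python
import hashlib


def pick_version(session_id: str, canary_percent: int,
                 stable: str = "v1", canary: str = "v2") -> str:
    """Pin each session to one version; roughly `canary_percent`% of sessions get the canary."""
    if not 0 <= canary_percent <= 100:
        raise ValueError("canary_percent must be between 0 and 100")
    digest = hashlib.sha256(session_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100  # same session, same bucket, always
    return canary if bucket < canary_percent else stable
```

Because a session's bucket never changes, raising the percentage (say from 10 to 50) only moves sessions from stable to canary and never back, so widening the rollout does not bounce conversations between versions.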

5.2 Need-Based Reading Order

If you already know which Ladder level you are working at, jump straight to the relevant article:

- At L0, struggling to decide what AgentCore even does: → Beginner's Guide.

- At L0 → L1 transition, deciding to deploy: → Implementation Guide Part 1.

- At L1, deciding to open it to multiple users: → Implementation Guide Part 2.

- At L1 → L2 transition, replacing manual deploys with IaC: → Implementation Guide Part 3.

- At L2, ready to extend capability: → Implementation Guide Part 1 (Code Interpreter section) + Implementation Guide Part 4 (Browser Use section).

- At L3, hitting the single-agent ceiling: → Implementation Guide Part 4.

- At any level from L1 onward, making it survive a quarter: → Production Operations Guide.

6. Related AWS Services and Articles

AgentCore does not exist in isolation. The services it most often appears alongside on hidekazu-konishi.com are listed below.

6.1 Within the AWS AI/ML Stack

Amazon Bedrock (the model layer). AgentCore runs the agent; Bedrock serves the model. Every AgentCore agent in this series calls a Bedrock model (Claude, Nova, Titan, or third-party) via the InvokeModel / InvokeModelWithResponseStream APIs or the unified Converse / ConverseStream APIs. The choice of model has the largest single effect on cost (see the cost decomposition in the Production Operations Guide).

Amazon Bedrock Knowledge Bases. Where AgentCore Memory holds conversational state, Knowledge Bases hold factual state. RAG over your documents lives in Knowledge Bases; what the user said two turns ago lives in Memory. Both can coexist in one agent.

Amazon Bedrock Guardrails. Content filtering and prompt-injection mitigation. The Shadow Mode pattern (from Part 4) is how you onboard Guardrails to a production AgentCore agent without exposing users to false positives: you measure them in observe-only mode first, then enforce.

AWS History and Timeline regarding Amazon Bedrock. A chronological reference of Bedrock and AgentCore evolution, with each milestone linked to its primary AWS source.

Amazon Bedrock Glossary. A dictionary-style article for term-by-term lookup covering foundation models, inference and throughput, the API surface, Knowledge Bases, Guardrails, Bedrock Agents, AgentCore, interoperability protocols, workflows and Studio, and customization and evaluation.

6.2 Supporting AWS Services

AWS Lambda. Many AgentCore tools are themselves Lambda functions exposed through Gateway's MCP server. The companion article MCP Server on AWS Lambda Complete Guide covers the MCP-on-Lambda pattern end-to-end.

Amazon Cognito. The identity provider this series uses for L2. Cognito issues the JWTs that AgentCore Identity verifies. In this series' architecture, federation with enterprise IdPs (Microsoft Entra ID, Okta, Google Workspace) is configured in Cognito rather than directly in AgentCore.

AWS WAF. When AgentCore is fronted by API Gateway or CloudFront, AWS WAF can enforce rate-based rules and the prompt-injection patterns described in AWS WAF for Generative AI — Prompt Injection Patterns. WAF is the outermost layer of a defense-in-depth model that Part 2 deepens at the application layer.

AWS Step Functions. For deterministic, auditable workflows that an agent must invoke, Step Functions remains the right tool. The hand-off pattern is: AgentCore decides what needs to happen; Step Functions executes the multi-step transactional flow.
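A minimal sketch of that hand-off, assuming boto3's Step Functions client (`start_execution` with `stateMachineArn`, `name`, and `input`); the tool function `submit_order_workflow`, the ARN, and the order payload are hypothetical. The agent's tool only starts the execution and returns its ARN, so the transactional steps run and are audited in Step Functions rather than inside the model loop. Supplying a deterministic execution `name` also helps make a retried tool call idempotent for Standard workflows, because Step Functions will not start a second execution with the same name.

```python
import json


def submit_order_workflow(sfn_client, state_machine_arn: str, order_id: str, items: list) -> str:
    """Hypothetical agent tool: hand a multi-step transaction to Step Functions."""
    response = sfn_client.start_execution(
        stateMachineArn=state_machine_arn,
        # Deterministic name so a retried tool call does not start a duplicate run;
        # order_id must satisfy Step Functions' execution-name rules.
        name=f"order-{order_id}",
        input=json.dumps({"orderId": order_id, "items": items}),
    )
    return response["executionArn"]


# Usage with a real client (requires AWS credentials):
#   import boto3
#   arn = submit_order_workflow(boto3.client("stepfunctions"), "<state-machine-arn>", "1234", ["sku-1"])
```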

Amazon CloudFront and AWS Amplify Hosting. The frontend (React, Vue, or vanilla JavaScript) for an AgentCore agent typically deploys via Amplify Hosting or directly to S3+CloudFront. The CDK ChatAppStack from Part 3 covers the S3+CloudFront path.

6.3 Related Articles in This Series

For broader context on enterprise AI agent design (covering decisions that are partly above the AgentCore abstraction), see the three-part Enterprise AI Agent Design Notes series:

- Enterprise AI Agent Design Notes Part 1

- Enterprise AI Agent Design Notes Part 2

- Enterprise AI Agent Design Notes Part 3

These notes cover decisions that AgentCore does not make for you — agent role design, evaluation strategy, organizational adoption — and that you will face whether you use AgentCore or not.

7. Frequently Asked Questions

7.1 Do I need AgentCore to use Bedrock?

No. AgentCore is optional. You can call Bedrock models directly from any application without ever touching AgentCore. AgentCore becomes useful when you want managed agent hosting, session memory, and secure tool exposure, that is, when the application is an agent rather than a one-shot LLM call.

7.2 How does AgentCore differ from Amazon Bedrock Agents?

Amazon Bedrock Agents is an opinionated agent-as-a-service: you define action groups, attach a knowledge base, and Bedrock orchestrates the loop. AgentCore takes the opposite approach: you bring your own orchestration (Strands, LangGraph, or your own code) and AgentCore provides the substrate (Runtime, Memory, Identity, etc.). Use Bedrock Agents when the built-in orchestration fits; use AgentCore when you need to bring a third-party framework or have outgrown the built-in model.

7.3 Which framework should I use with AgentCore?

AgentCore is framework-agnostic by design. The two most common choices in 2026 are Strands Agents (an AWS-aligned, lightweight framework that pairs particularly cleanly with AgentCore Runtime) and LangGraph (when you want explicit state-machine semantics for multi-agent workflows). Either works. Part 1 uses Strands as the example; Part 4 shows the LangGraph integration.

7.4 Can I adopt AgentCore one service at a time?

Yes, and you should. The Deployment Ladder in Section 4 is explicitly designed around this. Start at L1 with Runtime + Memory, add L2 (Identity + Gateway + Cedar) only when you have multiple users, add L3 capabilities only when the use case justifies them, and reach L4 only when one agent cannot hold the task. Adopting all seven services on Day 1 is the most expensive way to learn AgentCore.

7.5 Where are AgentCore logs stored, and how long are they retained?

AgentCore application logs and traces are vended to Amazon CloudWatch Logs. Retention follows whatever retention policy you set on the log groups. The unconfigured default is "Never Expire", which keeps logs indefinitely and is the wrong choice for cost, so set explicit retention as part of L1. The four-signal observability section of the Production Operations Guide covers this in depth.

7.6 How do I estimate the cost of an AgentCore deployment?

Decompose the cost into four buckets: model tokens (largest variance, dominated by conversation length and model choice), vCPU-hours in Runtime (driven by session duration; check the current pricing page for how idle and I/O-wait time during streaming is billed), Memory storage (the long-term namespace can grow without bound, so set TTLs), and tool invocation cost (varies by tool implementation). Per-bucket guidance is in the Cost Optimization section of the Production Operations Guide. This hub deliberately does not quote per-unit pricing: AgentCore pricing has changed multiple times since GA, and the canonical reference is the AWS pricing page rather than a blog snapshot.

7.7 What is MCP and do I need it?

MCP (Model Context Protocol) is the standard protocol that AgentCore Gateway uses to expose tools to the agent. You do not call MCP directly: Gateway and your framework's MCP client handle it for you. You start needing MCP when you want the same tool to be usable by multiple agents (or external agents from other systems) rather than being hard-coded to one agent's Python file.

7.8 What is the recommended way to deploy AgentCore to production?

AWS CDK with the four-stack pattern from Implementation Guide Part 3. The summary: FoundationStack (Cognito, DynamoDB, base IAM), BedrockStack (Guardrails, Memory resources), AgentStack (ECR + CodeBuild + Runtime), ChatAppStack (CloudFront + S3 frontend). One cdk deploy --all brings up the whole environment in roughly eleven minutes and gives you a reproducible deployment that can be recreated cleanly in another account or Region.

8. Summary

This hub argues for one specific way to adopt Amazon Bedrock AgentCore: as a ladder, not as a menu. Start at L0 with a local agent prototype, climb to L1 (Hosted Core) when the prototype proves out, climb to L2 (Identity-Hardened Exposure) when more than your immediate team will call it, climb to L3 (Capability Extended) only when the use case demands it, and reach L4 (Distributed Multi-Agent) only when a single agent's context becomes the bottleneck. Run the Production Discipline axis (observability, cost control, resilience, runbooks) in parallel from L1 onward, not as a deferred phase.

The six existing AgentCore articles on hidekazu-konishi.com map onto this ladder directly. The Beginner's Guide establishes vocabulary. Implementation Guide Parts 1 through 4 walk you up the ladder from L1 to L4. The Production Operations Guide is the playbook for the horizontal axis. Read in numbered order, the six articles take you from "what is an agent" to "my production AgentCore deployment has been up for a quarter." Read in need-based order, each one is a self-contained reference for the level you are actually working at.

If you remember one thing from this hub: AgentCore's composability is its main feature and its main hazard. Use the Ladder to choose what to adopt next, and use the Production Operations Guide to keep what you adopted working. The seven services compose well — but only if you compose them deliberately.

9. References

9.1 The AgentCore Series on hidekazu-konishi.com

- Amazon Bedrock AgentCore Beginner's Guide — AI Agent Development from Basics with Detailed Term Explanations

- Amazon Bedrock AgentCore Implementation Guide Part 1: Runtime, Memory, and Code Interpreter Patterns

- Amazon Bedrock AgentCore Implementation Guide Part 2: Multi-Layer Security with Identity, Gateway, and Policy

- Amazon Bedrock AgentCore Implementation Guide Part 3: Building a 4-Stack CDK Architecture with an Observability Pipeline

- Amazon Bedrock AgentCore Implementation Guide Part 4: Multi-Agent Orchestration

- Amazon Bedrock AgentCore Production Operations Guide — Observability, Cost Optimization, and Disaster Recovery

9.2 Related Articles on hidekazu-konishi.com

- MCP Server on AWS Lambda Complete Guide

- AWS WAF for Generative AI — Prompt Injection Patterns

- Enterprise AI Agent Design Notes Part 1

- Enterprise AI Agent Design Notes Part 2

- Enterprise AI Agent Design Notes Part 3

9.3 AWS Official Documentation

- Amazon Bedrock AgentCore documentation

- Amazon Bedrock documentation

- Model Context Protocol specification


Written by Hidekazu Konishi