CloudFront KeyValueStore and Edge Functions Cookbook: A/B Testing, Geo Routing, Feature Flags, and Token Validation

First Published:

Last Updated:

This article is a catalog of eight patterns that take advantage of that combination. Each pattern is a complete recipe — what the pattern is for, what KVS holds, what the function does, the JavaScript code, the operational caveats, and a quick cost note. The patterns are independent and you can adopt any subset, but they are presented in order of increasing complexity so that reading them sequentially gives you a reasonable mental model of where KVS fits and where it does not.

The article assumes you already know what CloudFront Functions and Lambda@Edge are at a high level. If you need a foundation on CloudFront, ACM, WAF, and Lambda@Edge as a stack, see the related article Add CloudFront WAF, Edge, ACM to a Custom Origin Like AWS Amplify Hosting.

Table of Contents:

- Introduction — Where KVS Fits in the Edge Compute Picture

- CloudFront KeyValueStore Basics

- CloudFront Functions vs. Lambda@Edge vs. KVS — A Decision Matrix

- Pattern 1: A/B Testing With KVS-Driven Cohort Assignment

- Pattern 2: Geographic Redirect Driven by a Country Mirror Map

- Pattern 3: URL Rewriter With a Dynamic Rule Table

- Pattern 4: Authentication Bypass List for Known Bots and Crawlers

- Pattern 5: Feature Flag Resolution at the Edge

- Pattern 6: Lightweight JWT Validation With KVS-Held Signing Keys

- Pattern 7: Cache Key Normalization

- Pattern 8: Rate-Limit Hint Via Edge Bucketing

- Operational Considerations

- Cost Analysis

- Summary

- References

1. Introduction — Where KVS Fits in the Edge Compute Picture

Edge compute on CloudFront has, until KVS, lived on a spectrum with three positions. CloudFront Functions sit at the fast, cheap, and constrained end: a 1 ms execution budget, a tiny JavaScript runtime, no network access, no filesystem, and a flat-rate price per million invocations that is roughly an order of magnitude lower than Lambda@Edge. Lambda@Edge sits at the powerful but expensive end: full Node.js or Python, several seconds of execution time, network access, and per-request billing closer to Lambda. Origin code sits everywhere else.The gap that this spectrum left was small but persistent — request-time decisions that depend on mutable data. A/B test assignments, feature flags, country-to-mirror mappings, and short URL rewrite tables all share the same shape: a small lookup table that the function needs to read on every request. Without KVS, the only practical options were to embed the table in the function code and redeploy on every change, or to call the origin (or a small backend) from Lambda@Edge and pay for the network round trip.

KVS closes that gap. It gives CloudFront Functions a per-edge, in-memory key-value lookup that resolves in microseconds, with a control-plane API that lets you update keys without redeploying functions. The functions can stay short and cheap, the data can stay mutable, and the deployment of code and the rotation of data become independent operations. This is the property the rest of the patterns in this article exploit.

1.1 What This Article Covers

The article has four parts. The first three sections explain KVS itself, the practical limits, and the choice between CloudFront Functions, Lambda@Edge, and KVS. Sections 4 through 11 are the eight patterns. Sections 12 and 13 cover cross-pattern operational concerns — KVS update propagation, deployment automation, and cost analysis. Section 14 distills the cross-pattern principles into a Summary, and section 15 is the references list.1.2 What This Article Does Not Cover

This is not an introduction to Lambda@Edge — that is assumed knowledge. It also does not cover CloudFront Distribution setup, WAF rules, or origin authentication; for that ground, see the related articles linked in §15. Quotas, latencies, and prices cited in this article reflect the AWS documentation as of 2026-04; re-confirm them against the official AWS documentation before depending on them in production.2. CloudFront KeyValueStore Basics

KVS is a regional-but-global control plane on top of an edge-replicated data plane. You create the store inus-east-1 through CloudFront APIs, write keys through a control-plane API, and the values are propagated to every edge location where any associated CloudFront Function runs. Reads from inside the function are local to the edge and treated as in-process lookups, with sub-millisecond latency in the typical case. Per the CloudFront Developer Guide, KVS reads do not count toward the 1 ms function compute budget that determines Function throttling — but they do accumulate as observable wall-clock time, so multiple sequential reads still add to end-to-end request latency (see §12.1 for the operational implications). Reads are billed separately from Function invocations — at the time of writing, $0.03 per 1 million KVS reads — but at that rate the cost stays subdominant to Function invocation charges for typical patterns. (See §13 for the full pricing breakdown.)2.1 The Practical Limits That Shape Every Pattern

The patterns in this article are constrained by a small number of hard limits. Treat the following as the working set you need to design around:| Limit | Value | What It Affects |

|---|---|---|

| Maximum total store size | 5 MB per KVS | How much data you can fit; the cap on table-driven patterns like geographic redirects |

| Maximum key size | 512 bytes (UTF-8) | Hash strategy for keys; long URLs must be hashed before storage |

| Maximum value size | 1 KB (1,024 bytes, UTF-8) | Encourages compact JSON, base64-encoded blobs, or hashed-only values |

| Maximum number of KVS per AWS account | 50 (default; soft limit, raise via Service Quotas) | Caps multi-tenant sharding strategies; plan namespacing within a smaller number of stores, or request a quota increase |

| Maximum number of Functions per KVS | 10 | Allows multiple Functions to share one store; useful for staging/prod isolation or splitting concerns across viewer-request and viewer-response |

| Number of KVS associations per Function | 1 | One Function reads from at most one KVS; multi-tenant patterns must namespace inside a single store |

| Functions runtime that can read KVS | cloudfront-js-2.0 | Older runtime cannot use the KVS API; you must opt into the 2.0 runtime |

| Update propagation time across edges | Typically a few seconds (no published SLO; re-verify against current AWS documentation before depending on a specific number) | Affects how aggressive your A/B and feature-flag patterns can be |

| Read latency from inside Functions | Sub-millisecond, treated as local | Allows multiple lookups per request without breaking the 1 ms budget |

Two of these limits are particularly load-bearing on pattern design. The 1 KB value cap is the reason every pattern in this article either stores compact JSON or hashes longer payloads before storage. The 5 MB total cap is the reason you cannot blindly load a full IP allow-list or a full URL-rewrite map; you must either keep the table small or shard across stores by use case — and the 50-per-account ceiling means even sharding has a finite headroom.

2.2 The KVS Read API From Inside CloudFront Functions

Runtime capabilities note: The CloudFront Functions JS 2.0 runtime exposes thecrypto module (createHash and createHmac with md5, sha1, sha256 only), the full Buffer module, TextDecoder/TextEncoder, and the global atob/btoa functions. RSA and EC signature verification (RS256, ES256, etc.) is not supported because crypto does not expose RSA/EC primitives — those require Lambda@Edge.Inside the function, the API is a thin promise-returning wrapper. You import the CloudFront helper module, open a handle to the associated KVS once at module top-level, and read keys with

await. A complete read pattern looks like this:import cf from 'cloudfront';

const kvsId = 'EXAMPLEa1b2c3d4-e5f6-7890-abcd-ef1234567890';

const kvs = cf.kvs(kvsId);

async function handler(event) {

const request = event.request;

try {

const value = await kvs.get('feature_flag_new_checkout');

if (value === 'on') {

request.headers['x-feature-new-checkout'] = { value: 'on' };

}

} catch (err) {

// Key not found or store not yet propagated — fail open.

}

return request;

}

The

kvs.get(key) returns the value as a string, or throws if the key is missing. The pattern of catching and continuing is intentional — KVS reads must always fail open in viewer-request handlers, because the alternative is dropping legitimate traffic when a deployment race or a propagation delay leaves a key briefly missing.2.3 Writing Keys From the Control Plane

Writes happen out-of-band through the CloudFront KeyValueStore API, not from inside the function. You can use the AWS CLI, the SDK, or any IaC tool that wraps the API. A representative AWS CLI write:aws cloudfront-keyvaluestore put-key \

--kvs-arn arn:aws:cloudfront::[ACCOUNT]:key-value-store/EXAMPLEa1b2c3d4-e5f6-7890-abcd-ef1234567890 \

--if-match "EXAMPLE-ETAG-0123456789" \

--key "feature_flag_new_checkout" \

--value "on"

The API uses optimistic concurrency through ETags — each control-plane

describe-key-value-store call returns the current ETag for the store, and any key-modifying call (put-key, delete-key, update-keys) must pass that ETag in --if-match or fail. ETags exist only on the control plane; key reads from inside the function (kvs.get()) do not return them, because the function-side API is purely a lookup. The control-plane ETag protects you from concurrent writers stomping on each other, which matters more than it sounds when the same KVS is updated from a CI pipeline, an operator script, and a dashboard.2.4 What KVS Is Not

KVS is not a general-purpose database. It does not support range queries, secondary indexes, transactions, or consistency stronger than eventual. Its consistency model is "all edges will converge in seconds, but you may briefly see different values from different viewer locations." Anything that requires strong consistency at request time — billing decisions, authoritative authentication, anti-replay — must run at the origin or use a different primitive entirely. The patterns in this article are explicitly designed around eventual consistency.3. CloudFront Functions vs. Lambda@Edge vs. KVS — A Decision Matrix

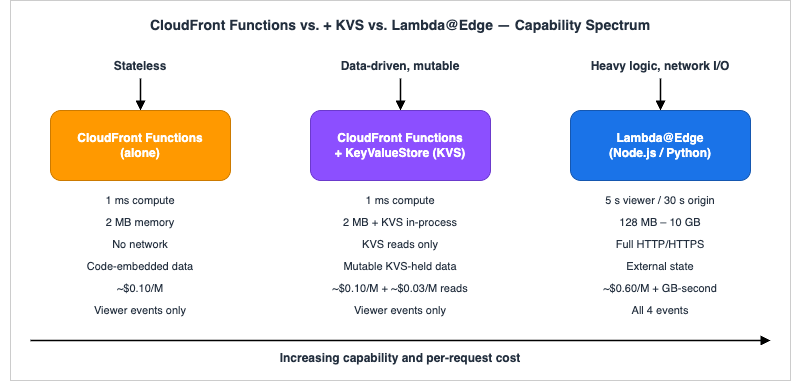

The decision between CloudFront Functions and Lambda@Edge has always been about latency, cost, and capability, but KVS shifts the cost-effective frontier of what a Function can do. The matrix below is the one I use when deciding where new edge logic should live. The visual that follows the matrix shows the three positions on the same spectrum, with KVS sitting between the stateless and the network-capable options.

| Concern | CloudFront Functions | CloudFront Functions + KVS | Lambda@Edge |

|---|---|---|---|

| Execution time budget | 1 ms | 1 ms (KVS read in-process) | Up to 5 s viewer / 30 s origin |

| Memory | 2 MB | 2 MB | viewer-request/viewer-response: up to 128 MB; origin-request/origin-response: up to 10,240 MB (10 GB), per Lambda standard quotas |

| Network access | None | KVS lookup only (in-process) | Full HTTP/HTTPS |

| Runtime | JavaScript (cloudfront-js-2.0) | JavaScript (cloudfront-js-2.0) | Node.js / Python |

| Trigger events | Viewer request, viewer response | Viewer request, viewer response | All 4 events |

| Per-million invocations | $0.10 | $0.10 (function) | $0.60 per million requests (Lambda@Edge viewer-request/viewer-response) (verified 2026-04 on aws.amazon.com/lambda/pricing/) |

| State storage | Code-embedded (immutable until redeploy) | KVS (mutable, second-level propagation) | External (DynamoDB Global Tables, etc.) |

| Best for | Stateless URL normalization, cheap header rewrites | Table-driven routing, flags, allow-lists | Heavy logic, third-party calls, body manipulation |

The shift KVS introduces is in the middle column. Many use cases that previously had to be Lambda@Edge — A/B test assignment that depends on a flag table, geo routing that depends on a country-to-host map, allow-listing that depends on a list — can now be done in a CloudFront Function for an order of magnitude less per million requests, provided the data fits in 5 MB. The remaining Lambda@Edge use cases are the ones that genuinely need network calls (cross-region database lookups, third-party service calls, KMS decrypt with keys not in the function), or that need to manipulate the request or response body, which CloudFront Functions cannot do.

A useful refinement: Lambda@Edge can also write to KVS through the standard SDK — the KVS data-plane API is regional in

us-east-1, so the SDK client must be instantiated with region: 'us-east-1' regardless of which Lambda@Edge replica region is executing the code. The Lambda@Edge execution role also needs explicit IAM permissions on the target KVS resource ARN (typically cloudfront-keyvaluestore:DescribeKeyValueStore, cloudfront-keyvaluestore:PutKey, cloudfront-keyvaluestore:DeleteKey, and cloudfront-keyvaluestore:UpdateKeys); without these, the SDK call will fail with AccessDeniedException at runtime even though the function is otherwise wired up correctly. A common pattern is to have a viewer-response Lambda@Edge function maintain a hot keyset that a separate viewer-request CloudFront Function then reads. The CloudFront Function does the cheap, frequent work; the Lambda@Edge does the periodic, expensive work that updates the table. Be deliberate about write frequency, though: KVS write API calls are billed per request at a rate well above the per-read price (verify the current write-API rate on aws.amazon.com/cloudfront/pricing/ before designing high-frequency write patterns), so writing on every viewer-response request is rarely sensible — sample, batch, or throttle.Prerequisite for the patterns that follow — CloudFront viewer headers must be enabled on the distribution: Several patterns in §4–§11 read CloudFront-managed viewer headers such as

cloudfront-viewer-country (Pattern 2, Pattern 8), cloudfront-viewer-address (Pattern 1, Pattern 4, Pattern 8), or forwarded cookies like ab_id (Pattern 1). These are not available to Functions by default — CloudFront only exposes them when the associated cache policy or origin request policy on the behavior is configured to forward them. The simplest path is to attach the AWS-managed origin request policy Managed-AllViewer (or Managed-AllViewerExceptHostHeader for custom origins that fail on a forwarded Host) on the cache behavior the Function is associated with; alternatively, create a custom policy and explicitly include each cloudfront-viewer-* header you need. If the header is absent in the policy, the corresponding lookup in the function silently returns undefined and the pattern degrades to its fallback path — functional but with the country/IP/cookie-aware logic disabled. Verify the policy attachment first when a pattern appears to "do nothing" in production.4. Pattern 1: A/B Testing With KVS-Driven Cohort Assignment

Heads-up before copying any of the snippets in sections 4–11: everycf.kvs('EXAMPLEa1b2c3d4-e5f6-7890-abcd-ef1234567890') call and every --kvs-arn ...:EXAMPLEa1b2c3d4-e5f6-7890-abcd-ef1234567890 CLI invocation uses an example KVS resource ID. Replace it with the actual KVS resource ID returned by aws cloudfront list-key-value-stores (or the value shown in the CloudFront console after the store is created). The function will silently fail to associate with the store if the example value is left in place, with no error in the function code itself — the misconfiguration only surfaces at request time as a missing-key behavior. Likewise, replace the example ETag value EXAMPLE-ETAG-0123456789 with the real ETag returned by describe-key-value-store when issuing --if-match writes.A/B testing at the edge is the canonical KVS use case. The function decides which cohort a viewer belongs to and either rewrites the URI to a variant path, sets a header for the origin to consume, or sets a cookie that downstream code reads. The wrinkle is that the assignment rule itself — "Treatment B is 30% of traffic, treatment C is 10%, the rest get control" — needs to change without a function redeploy, which is exactly the constraint KVS solves.

4.1 The Data Layout

Store one key per experiment, with a compact JSON value describing the splits. A representative entry:{

"experiment": "checkout_v2",

"splits": [

{ "cohort": "control", "weight": 60, "uri_prefix": "/checkout" },

{ "cohort": "variant_b", "weight": 30, "uri_prefix": "/checkout-v2" },

{ "cohort": "variant_c", "weight": 10, "uri_prefix": "/checkout-v3" }

],

"active": true

}

The 1024-byte value cap is comfortable here unless the experiment has many cohorts. If it does, split it across multiple keys (

exp:checkout_v2:meta, exp:checkout_v2:splits).4.2 The Function

import cf from 'cloudfront';

import crypto from 'crypto';

const kvs = cf.kvs('EXAMPLEa1b2c3d4-e5f6-7890-abcd-ef1234567890');

function pickCohort(splits, viewerKey) {

// Stable hash so the same viewer always lands in the same cohort.

const hash = crypto.createHash('sha256').update(viewerKey).digest('hex');

const bucket = parseInt(hash.substring(0, 8), 16) % 100;

let cumulative = 0;

for (const split of splits) {

cumulative += split.weight;

if (bucket < cumulative) return split;

}

return splits[splits.length - 1];

}

async function handler(event) {

const request = event.request;

const cookies = request.cookies || {};

// Use a stable viewer key: existing AB cookie, then session, then IP+UA hash.

const viewerKey =

(cookies['ab_id'] && cookies['ab_id'].value) ||

request.headers['cloudfront-viewer-address']?.value ||

request.headers['user-agent']?.value ||

'anonymous';

try {

const raw = await kvs.get('exp:checkout_v2');

const exp = JSON.parse(raw);

if (!exp.active) return request;

const split = pickCohort(exp.splits, viewerKey);

request.headers['x-ab-cohort'] = { value: split.cohort };

request.uri = request.uri.replace(/^\/checkout/, split.uri_prefix);

} catch (err) {

// Experiment not configured or KVS missing — control path.

}

return request;

}

Two design choices in this function bear calling out. First, the viewer key is chosen from a fallback chain rather than a single source — production traffic includes a wide spread of clients, and you want the same viewer to land in the same cohort across requests even when one of the inputs (the cookie, the IP) is missing. Second, the JSON parse is wrapped in a try/catch and the failure path is "no experiment, do nothing" — the function never blocks legitimate traffic because of a malformed key.

Cache/Origin Request Policy requirement:

request.cookies is only populated for cookies that the cache policy or origin request policy attached to the distribution explicitly forwards. Without forwarding ab_id in the cache key (or at minimum in origin requests), cookies['ab_id'] reads as undefined on every request and cohort assignment silently falls through to the IP/UA fallback. Verify the policy before relying on cookie-based stickiness, and remember that adding the cookie to the cache key fragments the cache by viewer — usually acceptable for the homepage, rarely acceptable for static assets.4.3 Operational Notes

The propagation delay between writing a new split and seeing it at every edge is on the order of seconds. This is fine for ramp-ups ("move 10% to 30%") but it means you cannot use this pattern to instantly stop an experiment globally — the recommended kill switch is a separateactive: false field on the same key, set through a single API call, which propagates within the same window. The cost picture: each request pays one Function invocation plus one KVS read, both billed at low per-million rates ($0.10/M and $0.03/M respectively at current pricing); a 30 ms cohort-assignment Lambda@Edge replaced by this pattern saves roughly the difference in per-million pricing on every viewer request, even after the KVS read fee.5. Pattern 2: Geographic Redirect Driven by a Country Mirror Map

Routing viewers to a country-specific origin or path is a classic edge use case, and the CloudFrontcloudfront-viewer-country header gives you the country at no extra cost. What changes with KVS is that the country-to-mirror map becomes data rather than code, which matters when you operate in dozens of countries and want product or marketing to update the map without involving infrastructure.5.1 The Data Layout

One key per country code, value is the destination prefix or full URL. Keys are short, so the 512-byte key cap is not a concern, and values are short so the 1 KB value cap is not either. Total store size scales linearly with the number of countries, which for global commerce is at most a few hundred entries — well inside the 5 MB cap.Key: geo:JP Value: https://www.example.co.jp Key: geo:DE Value: https://www.example.de Key: geo:US Value: https://www.example.com Key: geo:* Value: https://www.example.com (default fallback)

The wildcard

geo:* key holds the default for countries that do not have a specific mirror. Storing this in KVS rather than hardcoding it lets you change the default without a deploy.5.2 The Function

import cf from 'cloudfront';

const kvs = cf.kvs('EXAMPLEa1b2c3d4-e5f6-7890-abcd-ef1234567890');

async function handler(event) {

const request = event.request;

const headers = request.headers;

const country = (headers['cloudfront-viewer-country']?.value || '').toUpperCase();

if (!country) return request;

if (request.uri !== '/' && request.uri !== '/index.html') return request;

let mirror;

try {

mirror = await kvs.get(`geo:${country}`);

} catch (_) {

try { mirror = await kvs.get('geo:*'); } catch (_) { return request; }

}

return {

statusCode: 302,

statusDescription: 'Found',

headers: {

location: { value: mirror },

'cache-control': { value: 'no-store' }

}

};

}

The function only redirects on the homepage to avoid breaking deep links, which is a typical product requirement.

cache-control: no-store is critical on the response — without it CloudFront would happily cache the redirect and start serving the German redirect to Japanese viewers when the cache key does not include the country header.5.3 Operational Notes

If you do want the redirect cached per country, you must addcloudfront-viewer-country to the cache key in the cache policy. Otherwise leave the response uncacheable and let the function run on every homepage request — at CloudFront Functions pricing, the cost is negligible compared to the operational simplicity. Updating the map is a single put-key per changed country, with no function or distribution redeploy.6. Pattern 3: URL Rewriter With a Dynamic Rule Table

Rewriting URLs at the edge — short URLs, vanity paths, legacy URL preservation after a site rebuild — is commonly done with a function that holds the rewrite table in source. The downside is that every new short URL or every legacy redirect requires a deploy. With KVS, the table moves out of code and the deploy cadence drops to "whenever the function logic itself changes."6.1 The Data Layout

The natural shape is one key per source path, with the value being either the destination path or a small JSON object that adds a status code:

Key: rw:/promo/spring Value: {"to":"/promotions/2026-spring","status":301}

Key: rw:/old-pricing Value: {"to":"/pricing","status":301}

Key: rw:/r/ai-2026 Value: {"to":"/blog/2026-ai-recap","status":302}

When the source path can be longer than 512 bytes (long marketing URLs, deep legacy paths), hash the source path with SHA-256 and use the hex digest as the key. The function does the same hashing on the request URI before lookup.

6.2 The Function

import cf from 'cloudfront';

const kvs = cf.kvs('EXAMPLEa1b2c3d4-e5f6-7890-abcd-ef1234567890');

async function handler(event) {

const request = event.request;

const key = `rw:${request.uri}`;

let raw;

try {

raw = await kvs.get(key);

} catch (_) {

return request; // No rewrite — let it pass through.

}

let rule;

try { rule = JSON.parse(raw); } catch (_) { return request; }

const status = rule.status || 301;

return {

statusCode: status,

statusDescription: status === 301 ? 'Moved Permanently' : 'Found',

headers: {

location: { value: rule.to },

'cache-control': { value: 'public, max-age=300' }

}

};

}

A 5-minute

cache-control lets CloudFront cache the rewrite response itself, which means most viewers get the redirect without ever invoking the function — a significant cost saving on popular short URLs.6.3 Operational Notes

Rewrite tables grow. Keep an automated audit that compares the active key list to the canonical source (a Git-managed JSON file, a marketing system, etc.) and removes orphaned entries. Without this, the 5 MB cap will eventually bite, and unlike a function deploy, KVS does not give you a clean "redeploy with the new table" mechanic — you have to enumerate, diff, and apply individual writes.7. Pattern 4: Authentication Bypass List for Known Bots and Crawlers

Rate-limiting and authentication challenges at the edge often need an exception list — the SEO crawler that should never be challenged, the monitoring service that polls every minute, the partner integration that uses a service account from a known IP. Hardcoding this list in the function works at small scale but fails as the list grows past a few dozen entries and starts changing weekly.7.1 The Data Layout

Two complementary representations work well, depending on whether you bypass on user-agent or on IP. For user-agent matching, store a key per known UA token:Key: ua_allow:GoogleBot Value: 1 Key: ua_allow:Bingbot Value: 1 Key: ua_allow:UptimeRobot Value: 1

For IP allow-listing, store one key per CIDR with a comment value for auditability:

Key: ip_allow:198.51.100.0/24 Value: {"owner":"partner-acme","note":"prod"}

Key: ip_allow:203.0.113.42/32 Value: {"owner":"monitoring","note":"pingdom"}

CIDR matching in the function is more complex than exact-match, so a common simplification is to hash full /32 IPs as keys and let an out-of-band job expand CIDRs into individual /32s when writing. This trades store size for read simplicity.

7.2 The Function

import cf from 'cloudfront';

const kvs = cf.kvs('EXAMPLEa1b2c3d4-e5f6-7890-abcd-ef1234567890');

function tokenizeUA(ua) {

// Extract the leading product token (e.g., "GoogleBot" from full UA).

const match = (ua || '').match(/^([A-Za-z][A-Za-z0-9_-]+)/);

return match ? match[1] : '';

}

// cloudfront-viewer-address formats: "198.51.100.10:46532" (IPv4) or

// "[2001:db8::1]:46532" (IPv6). A naive split(':') corrupts IPv6.

function extractViewerIp(rawValue) {

if (!rawValue) return '';

if (rawValue.startsWith('[')) {

const end = rawValue.indexOf(']');

return end > 0 ? rawValue.slice(1, end) : '';

}

const colon = rawValue.lastIndexOf(':');

return colon > 0 ? rawValue.slice(0, colon) : rawValue;

}

async function handler(event) {

const request = event.request;

const headers = request.headers;

const ua = headers['user-agent']?.value || '';

const ip = extractViewerIp(headers['cloudfront-viewer-address']?.value);

const isIPv6 = ip.includes(':');

const uaToken = tokenizeUA(ua);

const checks = [];

if (uaToken) checks.push(kvs.get(`ua_allow:${uaToken}`));

if (ip) checks.push(kvs.get(`ip_allow:${ip}/${isIPv6 ? 128 : 32}`));

const results = await Promise.allSettled(checks);

const allowed = results.some(r => r.status === 'fulfilled');

if (allowed) {

request.headers['x-bypass-auth'] = { value: '1' };

}

return request;

}

The function uses

Promise.allSettled so a missing key in either lookup does not short-circuit the other. The downstream origin (or a subsequent Lambda@Edge) reads x-bypass-auth and skips the challenge.7.3 Operational Notes

This pattern is fail-secure (also called fail-closed) by design — a missing key means "no bypass," so the auth layer continues to protect the origin even if KVS is briefly unreachable. The opposite pattern — fail-open, where the bypass header is set whenever the KVS read fails — would let attackers replay a known bot UA to skip your auth layer. Always have the bypass be additive on top of the normal auth path, never a replacement.8. Pattern 5: Feature Flag Resolution at the Edge

Edge feature flags — turning a new homepage layout on for 10% of users, gating a beta API, suppressing a deprecated endpoint — overlap with A/B testing but have a different lifecycle. A/B tests are scoped to a handful of long-running experiments; feature flags can number in the hundreds and change daily. The data layout has to scale to that cardinality without blowing the 5 MB cap or making KVS reads quadratic in the flag count.8.1 The Data Layout

Group flags by surface area, one key per surface, and let the value be a compact JSON map:

Key: flags:checkout

Value: {"new_addr":true,"oneclick":false,"reorder_btn":true}

Key: flags:homepage

Value: {"hero_v3":true,"sticky_promo":false}

Grouping keeps the read count down — for any given route the function reads at most one or two keys instead of one per flag. The grouping key is usually the team or product surface that owns the flags, which also matches who has write access in the IAM policy on the KVS.

8.2 The Function

import cf from 'cloudfront';

const kvs = cf.kvs('EXAMPLEa1b2c3d4-e5f6-7890-abcd-ef1234567890');

function surfaceFor(uri) {

if (uri.startsWith('/checkout')) return 'checkout';

if (uri.startsWith('/api')) return 'api';

return 'homepage';

}

async function handler(event) {

const request = event.request;

const surface = surfaceFor(request.uri);

let flags = {};

try {

flags = JSON.parse(await kvs.get(`flags:${surface}`));

} catch (_) { /* fail open: no flags on */ }

for (const [name, on] of Object.entries(flags)) {

if (on === true) {

request.headers[`x-flag-${name}`] = { value: 'on' };

}

}

return request;

}

The origin reads the

x-flag-* headers and branches accordingly. This split keeps the edge function generic — the flag list lives in KVS, the flag behavior lives in the origin code that knows what each flag means. Adding a new flag requires no edge change at all.8.3 Operational Notes

Two pitfalls trip up teams new to this pattern. The first is treating KVS flags as authoritative for security gates — they are not. KVS is eventually consistent and globally cached, so a flag flipping off for security reasons can take several seconds to propagate. Always pair the edge flag with an origin-side check for anything security-sensitive. The second is letting the flag map grow without ceremony. The 1 KB value cap fits roughly 30–60 flags per surface depending on name length; past that you either split surfaces further or rotate to a separate flag service called from Lambda@Edge.9. Pattern 6: Lightweight JWT Validation With KVS-Held Signing Keys

JWT validation at the edge comes in two distinct flavors depending on which runtime you choose. CloudFront Functions (runtime:cloudfront-js-2.0) run within the 1 ms compute budget, use the KVS API natively, and expose crypto.createHmac with md5/sha1/sha256 only — which limits signature verification to HMAC-SHA256 (HS256). RSA and EC algorithms (RS256, ES256, etc.) and longer SHA variants (HS384, HS512) cannot be verified in this runtime regardless of Buffer availability. Lambda@Edge runs in a full Node.js environment with the standard crypto module and richer JWT libraries, supports asymmetric verification, but cannot access KVS directly and requires a separate key-storage mechanism such as DynamoDB, Secrets Manager, or S3. Choose the variant that matches your runtime constraints: Variant A for low-latency edge gating with HS256 tokens, Variant B when you need asymmetric algorithms (RS256, ES256) or more complex claim validation.9.1 The Data Layout

Store one key per active key ID (kid), with the value being the HMAC secret (within the 1 KB value cap). For symmetric HS256 signing, KVS works well; key rotation is a single put-key call with no function redeploy. RSA or EC public keys are too large for KVS values and require Lambda@Edge (Variant B):Key: jwt_key:2026-04 Value: <base64url-encoded HS256 secret> Key: jwt_key:2026-03 Value: <previous key, kept during rotation overlap>

Keep at least two overlapping keys during rotation windows so tokens signed with the previous key continue to validate.

9.2 Variant A: CloudFront Functions (cloudfront-js-2.0, HS256 only)

Runtime:cloudfront-js-2.0 — required to access the KVS API. This runtime exposes crypto.createHmac with md5/sha1/sha256 only, the Buffer module, TextDecoder, and global atob/btoa. The base64url decode below uses atob+TextDecoder for clarity; an equivalent Buffer.from(b64, 'base64').toString('utf8') implementation also works in this runtime. Only HS256 is supported among JWT signature algorithms because crypto exposes neither RSA/EC primitives nor SHA-384/SHA-512 — HS384, HS512, RS256, and ES256 all require Variant B.// Variant A: CloudFront Functions (cloudfront-js-2.0)

// Runtime: cloudfront-js-2.0

// Key storage: CloudFront KVS (cf.kvs())

// Algorithm: HS256 only (crypto exposes md5/sha1/sha256 — no RSA/EC)

import cf from 'cloudfront';

import crypto from 'crypto';

const kvs = cf.kvs('EXAMPLEa1b2c3d4-e5f6-7890-abcd-ef1234567890');

// base64url decode using atob() — available in cloudfront-js-2.0 runtime

function b64urlDecodeToString(input) {

const pad = input.length % 4;

const b64 = (input + '==='.slice(0, pad === 0 ? 0 : 4 - pad))

.replace(/-/g, '+').replace(/_/g, '/');

// atob returns a binary string; decode UTF-8 with TextDecoder

const binStr = atob(b64);

const bytes = Uint8Array.from(binStr, c => c.charCodeAt(0));

return new TextDecoder().decode(bytes);

}

async function handler(event) {

const request = event.request;

const auth = request.headers['authorization']?.value || '';

const m = auth.match(/^Bearer\s+(\S+)$/);

if (!m) return unauthorized();

const parts = m[1].split('.');

if (parts.length !== 3) return unauthorized();

const header = JSON.parse(b64urlDecodeToString(parts[0]));

if (header.alg !== 'HS256') return unauthorized();

let secret;

try {

secret = await kvs.get(`jwt_key:${header.kid}`);

} catch (_) {

return unauthorized();

}

const signingInput = `${parts[0]}.${parts[1]}`;

const expected = crypto.createHmac('sha256', secret)

.update(signingInput).digest('base64')

.replace(/=+$/, '').replace(/\+/g, '-').replace(/\//g, '_');

if (expected !== parts[2]) return unauthorized();

const claims = JSON.parse(b64urlDecodeToString(parts[1]));

if (claims.exp && Date.now() / 1000 > Number(claims.exp)) return unauthorized();

request.headers['x-jwt-sub'] = { value: String(claims.sub || '') };

return request;

}

function unauthorized() {

return {

statusCode: 401,

statusDescription: 'Unauthorized',

headers: { 'cache-control': { value: 'no-store' } }

};

}

9.3 Variant B: Lambda@Edge (Node.js runtime, flexible algorithms)

Runtime: Node.js (Lambda@Edge). Lambda@Edge does not have access to the CloudFront KVS API —cf.kvs() is unavailable. Signing keys must be fetched from an alternative store such as AWS Secrets Manager, DynamoDB, or an S3 object cached in the Lambda execution environment. The tradeoff is full Node.js API availability (including Buffer.from()), support for asymmetric algorithms via external JWT libraries (e.g., jsonwebtoken), and several seconds of execution budget — at the cost of higher per-invocation pricing compared to CloudFront Functions.// Variant B: Lambda@Edge (Node.js runtime)

// Runtime: Node.js 20.x (Lambda@Edge, viewer-request event)

// Key storage: AWS Secrets Manager (KVS is NOT available in Lambda@Edge)

// Algorithm: HS256 shown; extend with jsonwebtoken for RS256/ES256

const { SecretsManagerClient, GetSecretValueCommand } = require('@aws-sdk/client-secrets-manager');

// Lambda@Edge does NOT support environment variables, so the region must be

// hard-coded or derived at runtime (e.g., from process.env.AWS_REGION, which

// Lambda sets automatically to the executing edge region). The secret store

// region is independent of where the function executes; for low latency,

// replicate the secret to multiple regions via Secrets Manager replication

// and choose the closest at runtime, then rely on the module-level cache

// below to amortize the call across warm invocations.

const sm = new SecretsManagerClient({ region: process.env.AWS_REGION || 'us-east-1' });

let cachedKeys = null; // module-level cache; survives warm invocations

async function loadKeys() {

if (cachedKeys) return cachedKeys;

const res = await sm.send(new GetSecretValueCommand({ SecretId: 'jwt-signing-keys' }));

cachedKeys = JSON.parse(res.SecretString); // { "2026-04": "<secret>", "2026-03": "<secret>" }

return cachedKeys;

}

function b64urlDecode(input) {

const pad = input.length % 4;

const s = (input + '==='.slice(0, pad === 0 ? 0 : 4 - pad))

.replace(/-/g, '+').replace(/_/g, '/');

return Buffer.from(s, 'base64'); // Buffer.from() is available in Node.js

}

const crypto = require('crypto');

exports.handler = async (event) => {

const request = event.Records[0].cf.request;

const auth = (request.headers['authorization'] || [{}])[0].value || '';

const m = auth.match(/^Bearer\s+(\S+)$/);

if (!m) return unauthorized();

const parts = m[1].split('.');

if (parts.length !== 3) return unauthorized();

const header = JSON.parse(b64urlDecode(parts[0]).toString('utf8'));

if (header.alg !== 'HS256') return unauthorized();

const keys = await loadKeys();

const secret = keys[header.kid];

if (!secret) return unauthorized();

const signingInput = `${parts[0]}.${parts[1]}`;

const expected = crypto.createHmac('sha256', secret)

.update(signingInput).digest('base64')

.replace(/=+$/, '').replace(/\+/g, '-').replace(/\//g, '_');

if (expected !== parts[2]) return unauthorized();

const claims = JSON.parse(b64urlDecode(parts[1]).toString('utf8'));

if (claims.exp && Date.now() / 1000 > Number(claims.exp)) return unauthorized();

request.headers['x-jwt-sub'] = [{ key: 'x-jwt-sub', value: String(claims.sub || '') }];

return request;

};

function unauthorized() {

return { status: '401', statusDescription: 'Unauthorized',

headers: { 'cache-control': [{ key: 'Cache-Control', value: 'no-store' }] } };

}

9.4 Choosing Between Variant A and Variant B

Variant A (CloudFront Functions) executes within the 1 ms compute budget and charges at the CloudFront Functions rate (~$0.10/million invocations) — optimal for high-traffic gating where HS256 is acceptable and key rotation via KVS is sufficient. Variant B (Lambda@Edge) supports the full Node.js ecosystem, enabling RS256/ES256 verification and richer claim validation, but runs at Lambda@Edge pricing (~$0.60/million viewer-request invocations) and requires a separate key-storage solution since KVS is unavailable. For most token-gating use cases, Variant A is the right starting point; migrate to Variant B only when asymmetric algorithm support or additional claim logic is required.9.5 Operational Notes

Which variant to deploy: Use Variant A if your token issuer supports HS256 and you can rotate symmetric secrets via the KVS control-plane API. Use Variant B if you need RS256/ES256, want to use a standard JWT library, or require claim validation beyondalg, kid, exp, and sub.This pattern is for gating — keeping unauthenticated traffic off your origin — not for authorization. The origin must still verify the same token (or a session derived from it) before granting access to protected resources. The benefit of Variant A is that obviously invalid tokens are rejected at the edge for the cost of one CloudFront Function invocation. For asymmetric algorithms like RS256 or ES256 at the edge, use Variant B (Lambda@Edge) — the in-Function

crypto API in cloudfront-js-2.0 does not support RSA or EC key operations.10. Pattern 7: Cache Key Normalization

CloudFront caches by a key derived from URI, query string, and selected headers. Subtle differences in how clients present the same logical resource — uppercase host, mixed case query parameter names, harmless duplicated parameters, optional UTM tracking parameters that should not affect cache identity — fragment the cache and lower hit rates. A KVS-backed normalization function turns those variants into a single canonical form before the cache key is computed.10.1 The Data Layout

The key insight is that the rules for what to normalize are themselves data. Store one key per ruleset:Key: norm:strip_qs Value: ["utm_source","utm_medium","utm_campaign","fbclid","gclid"] Key: norm:lowercase_paths Value: ["/static","/images","/assets"]

Updating which tracking parameters to strip becomes a

put-key, not a function deploy.10.2 The Function

import cf from 'cloudfront';

const kvs = cf.kvs('EXAMPLEa1b2c3d4-e5f6-7890-abcd-ef1234567890');

async function handler(event) {

const request = event.request;

// Lowercase the path for designated prefixes.

let lowercasePrefixes = [];

try {

lowercasePrefixes = JSON.parse(await kvs.get('norm:lowercase_paths'));

} catch (_) {}

if (lowercasePrefixes.some(p => request.uri.startsWith(p))) {

request.uri = request.uri.toLowerCase();

}

// Strip designated tracking query parameters.

let stripList = [];

try {

stripList = JSON.parse(await kvs.get('norm:strip_qs'));

} catch (_) {}

if (request.querystring && stripList.length > 0) {

for (const param of stripList) {

delete request.querystring[param];

}

}

return request;

}

request.querystring is an object of { key: { value, multiValue?: [{ value }] } }. Use delete request.querystring[key] to remove a parameter.After the function returns, CloudFront computes the cache key from the (now-normalized) request, and tracking-parameter-only differences collapse to the same cache entry.

10.3 Operational Notes

The cache hit rate improvement from this pattern is most visible on traffic from email campaigns and ad networks, both of which append tracking parameters that the origin does not actually consume. Measure the hit rate before and after to confirm the gain; if the origin does consume one of the parameters, removing it at the edge will cause silent functional bugs. Always coordinate the strip list with the origin team.11. Pattern 8: Rate-Limit Hint Via Edge Bucketing

Origins often need to make per-IP or per-account rate-limit decisions but cannot afford to maintain hot per-IP state themselves. A CloudFront Function can pre-compute a coarse bucket for each request — by IP, by ASN, by API key — and pass it to the origin in a header. The origin then uses the bucket as a partition key for its own rate-limit logic. KVS holds the bucket-rule configuration and any small allow/deny modifiers.11.1 The Data Layout

Two complementary keys:

Key: rl:rules

Value: {"ip_bucket_bits":24,"ipv6_bucket_bits":64,"high_priority_asns":[15169,32934]}

Key: rl:multipliers

Value: {"trusted_partners":[15169],"untrusted_asns":[]}

The

ip_bucket_bits controls how aggressively IPv4 addresses are folded into buckets — 24 means /24 buckets, 32 means per-IP. ipv6_bucket_bits plays the same role for IPv6, defaulting to /64, which is typically the largest single-customer allocation a residential or mobile ISP hands out and therefore a sensible default partition size. The list of high-priority ASNs gets a higher rate-limit class at the origin.11.2 The Function

Note: CloudFront Functions does not natively expose ASN data on viewer requests. Thex-viewer-asn header used below is a custom header populated by upstream enrichment, not a native CloudFront header. Two practical implementation options:- Lambda@Edge with a bundled IP-to-ASN database: a viewer-request Lambda@Edge function that runs before the CloudFront Function looks up the viewer IP against a MaxMind GeoLite2 ASN database (or equivalent) bundled in the Lambda deployment package, then attaches

x-viewer-asnas a request header. Database refresh is a Lambda redeploy. - Origin-side enrichment with KVS feedback: the origin classifies the IP-to-ASN mapping after the first request and writes the result to KVS keyed by IP prefix, so subsequent requests from the same prefix get

x-viewer-asnset by a CloudFront Function that reads KVS. This trades freshness for simplicity and avoids the Lambda@Edge cost on every request.

The function below assumes the header has already been populated by one of these mechanisms; if you have not implemented the enrichment, the function still works but the high-priority ASN branch becomes a no-op.

import cf from 'cloudfront';

const kvs = cf.kvs('EXAMPLEa1b2c3d4-e5f6-7890-abcd-ef1234567890');

// cloudfront-viewer-address formats: "198.51.100.10:46532" (IPv4) or

// "[2001:db8::1]:46532" (IPv6). A naive split(':') corrupts IPv6.

function extractViewerIp(rawValue) {

if (!rawValue) return '';

if (rawValue.startsWith('[')) {

const end = rawValue.indexOf(']');

return end > 0 ? rawValue.slice(1, end) : '';

}

const colon = rawValue.lastIndexOf(':');

return colon > 0 ? rawValue.slice(0, colon) : rawValue;

}

function ipv4Bucket(ip, bits) {

const parts = ip.split('.').map(n => parseInt(n, 10));

if (parts.length !== 4 || parts.some(isNaN)) return null;

let n = (parts[0] << 24) | (parts[1] << 16) | (parts[2] << 8) | parts[3];

n = n & (-1 << (32 - bits));

return [

(n >>> 24) & 0xff,

(n >>> 16) & 0xff,

(n >>> 8) & 0xff,

n & 0xff

].join('.') + '/' + bits;

}

// Coarse IPv6 bucketing by /N prefix. Splits the address on ':', keeps the

// first ceil(N/16) groups, and reconstructs as "g1:g2:...::/N". This is

// approximate — full address normalization (e.g., expanding "::") is left to

// the origin, which has more compute headroom for it.

function ipv6Bucket(ip, bits) {

const groups = ip.split(':');

if (groups.length < 3) return null;

const keep = Math.ceil(bits / 16);

return groups.slice(0, keep).join(':') + '::/' + bits;

}

async function handler(event) {

const request = event.request;

const ip = extractViewerIp(request.headers['cloudfront-viewer-address']?.value);

// Note: CloudFront does not natively add a viewer-ASN header.

// ASN-based classification requires Lambda@Edge with an external lookup or KVS-stored data.

// The 'x-viewer-asn' header below is a placeholder populated by such upstream enrichment.

const asn = request.headers['x-viewer-asn']?.value || '';

let rules = { ip_bucket_bits: 24, ipv6_bucket_bits: 64, high_priority_asns: [] };

try { rules = Object.assign(rules, JSON.parse(await kvs.get('rl:rules'))); } catch (_) {}

let bucket = ip;

if (ip.includes(':')) {

bucket = ipv6Bucket(ip, rules.ipv6_bucket_bits) || ip;

} else if (ip) {

bucket = ipv4Bucket(ip, rules.ip_bucket_bits) || ip;

}

const klass = rules.high_priority_asns.includes(parseInt(asn, 10)) ? 'high' : 'std';

request.headers['x-rl-bucket'] = { value: bucket };

request.headers['x-rl-class'] = { value: klass };

return request;

}

The origin's rate limiter keys on

x-rl-bucket for the partition and uses x-rl-class to pick the policy — for example, a rate-limiting middleware at the origin applies a higher request-per-minute threshold for x-rl-class: 'high' (trusted ASNs) and a stricter threshold for x-rl-class: 'std' (all other traffic). The function does the cheap deterministic part on every request; the origin does the stateful counter increment, which is the part that actually requires consistency.11.3 Operational Notes

The function is not a rate limiter — it is an input to one. AWS WAF rate-based rules and origin-side limiters do the actual blocking. The benefit of this pattern is that the origin gets a stable, normalized partition key that already reflects the operator's intent (which ASNs are friendly, what IP granularity to use), without the origin having to know any of that itself. Updates to either KVS key propagate to all edges in seconds and require no deploy.12. Operational Considerations

Patterns are easy on a slide; the operational reality of running KVS in production is shaped by a few specific gotchas that are worth calling out cross-pattern.12.1 KVS Update Propagation Time

KVS reads and the 1 ms budget: KVS reads from inside a CloudFront Function are treated as local lookups, and although they are billed separately from Function invocations (at $0.03 per 1 million reads), they do not count against the 1 ms compute budget that determines Function throttling. In practice, however, multiple sequentialawait kvs.get() calls within a single invocation can accumulate wall-clock time. If your function performs more than two or three KVS lookups per request, measure actual p99 compute utilization in CloudWatch to confirm you are not approaching the throttle threshold — and remember that each extra read also adds to the per-million KVS-read line item on the bill.Updates to a key are typically visible within a few seconds at every edge, though AWS does not publish a numeric SLO for propagation. The propagation is eventually consistent and the actual time depends on edge location and current load — observe and re-verify against the current AWS documentation before depending on a specific number. Two implications follow. First, do not use KVS as a synchronous control surface for anything that needs to take effect "now" — always pair edge KVS reads with origin-side enforcement for security-sensitive decisions. Second, use the propagation window deliberately: when ramping an A/B experiment from 10% to 30%, the brief window where some edges show the new value and others the old is usually acceptable, but for a kill switch it is not, so design the kill switch to be a separate flag that has been in place since the experiment started.

12.2 Deployment Automation and Versioning

KVS does not have native versioning. When you write a key, the previous value is gone. This means every change should go through a controlled pipeline that:- Commits the desired KVS state to source control (a JSON or YAML file in a Git repository).

- Computes the diff against the current live state by listing keys via the API.

- Applies the diff with ETag-checked writes, with deletions explicit rather than implicit.

- Records the change in a deployment log alongside the human or automation that triggered it.

A practical bound on the diff-apply step: a single

UpdateKeys API request accepts at most 50 key-value operations or a 3 MB payload, whichever is reached first (re-confirm the latest values against the UpdateKeys API reference before depending on a specific number). For large rotations, chunk the diff into multiple requests; for typical incremental changes, the cap is rarely binding.Without this, you will eventually have an outage caused by "someone updated a flag from a console" and no one being able to reconstruct what changed. Treat KVS like database state: schema-managed, diff-applied, and rollback-able.

12.3 Rollback Strategy

To roll back, re-apply the previous JSON snapshot through the same diff pipeline. Because the propagation window is short, the perceived rollback time at the edge is dominated by your pipeline latency, not by KVS itself. Keep the pipeline fast — under one minute end-to-end is achievable and worth the investment.12.4 Observability

CloudFront publishes per-Function invocation metrics and per-Function compute utilization metrics to CloudWatch. KVS itself reports key count, total store size, and write API call metrics. The combination you actually want to watch in production:| Metric | Source | Why |

|---|---|---|

| Function invocations / sec | CloudWatch | Cost driver, also a request-volume canary |

| Function compute utilization (p99) | CloudWatch | If close to 1 ms, you are at risk of throttling |

| KVS total size | CloudFront API | Approaching 5 MB requires immediate rotation/sharding |

| KVS write API errors (4xx, 5xx) | CloudTrail / API logs | Catches ETag races and propagation issues |

| Function-emitted log lines (when enabled) | CloudWatch Logs | Required for debugging a misbehaving rule |

CloudFront Functions logging is opt-in and adds cost; enable it during initial rollout of each new pattern, then turn it off for steady-state if cost matters.

12.5 Staging KVS Changes Before Production

A reliable practice is to stage every KVS change against a non-production CloudFront distribution that uses a separate KVS, run a synthetic check that exercises every code path in the function, then promote the JSON snapshot to the production KVS. This is the same discipline you would apply to any other config-as-data system; the one KVS-specific element is that the staging KVS must be associated with a separate function (since one function reads at most one KVS), so promoting code is a separate step from promoting data.13. Cost Analysis

The cost picture for the patterns in this article has three components. Each is small individually; the lever is in which pattern replaces which alternative.13.1 Per-Pattern Cost Drivers

CloudFront Functions are billed per million invocations at a flat rate (approximately$0.10 per million as of writing; reconfirm against the current pricing page). KVS reads from inside a Function are billed at a separate, much lower rate — approximately $0.03 per 1 million reads — so a Function that performs one read per invocation adds roughly 30% on top of the Function invocation cost, and a Function that performs three reads per invocation roughly doubles the per-request bill (still an order of magnitude below Lambda@Edge). KVS writes and other non-read control-plane API calls are billed at a separate, higher per-request rate than reads (re-confirm against aws.amazon.com/cloudfront/pricing/; this article does not pin a specific number because the published rate has changed since launch), but write volume is dominated by deploy cadence rather than viewer traffic, so it rarely shows up on the bill. At the 5 MB store cap, KVS storage cost is negligible.Lambda@Edge, by contrast, is billed per request and per GB-second of compute, with a different per-million rate for invocations and a separate compute rate. The exact numbers move; the structural fact is that Lambda@Edge is roughly an order of magnitude more expensive per million viewer-request invocations than CloudFront Functions, and the gap widens as Lambda@Edge runs for longer.

13.2 The Replacement Math

For a viewer-request workload of 10 billion requests per month — a medium-large public website — moving a workload that previously used Lambda@Edge to a CloudFront Function backed by one KVS read per request saves on the order of ~$4,700 per month at current pricing: Lambda@Edge viewer-request invocations at $0.60/M cost $6,000/mo; CloudFront Functions at $0.10/M cost $1,000/mo; one KVS read per request at $0.03/M adds $300/mo; net savings $6,000 − ($1,000 + $300) = ~$4,700/mo. (Lambda@Edge also has a per-GB-second compute charge that this comparison omits, so the true gap is somewhat wider; reconfirm against aws.amazon.com/cloudfront/pricing/ before depending on a specific number.) This is the headline reason to adopt the patterns in this article. The patterns also reduce origin compute for cases where the function now handles a redirect or 401 that previously round-tripped to the origin.13.3 The Hidden Cost

The cost rarely captured in the pricing sheet is the operational overhead of maintaining KVS state. Pipelines, audits, alarms, and runbooks all need to exist. The first KVS-backed function in an organization usually costs more in engineering time than it saves in invocation cost; the second and third pay back the investment quickly because the same pipeline serves them.14. Summary

CloudFront KeyValueStore turns a previously awkward category of edge logic — "small mutable lookup tables that change without redeploys" — into a first-class capability of CloudFront Functions. Across the eight patterns in this article, the same shape recurs: keep the function short and stateless, push the data into KVS, and treat code deploys and data rotations as independent operations.The shortest answer to "should I use KVS?" is the following decision tree. Use CloudFront Functions alone when the logic is genuinely stateless (URL canonicalization, header rewrites, redirect-to-HTTPS). Use CloudFront Functions + KVS when the decision is data-driven, the data fits in 5 MB, eventual consistency is acceptable, and the per-request budget matters — A/B tests, geo routing, feature flags, allow-lists, JWT-HS256 gating, cache-key normalization, and rate-limit hinting all fit here. Use Lambda@Edge when you need network calls, body manipulation, asymmetric JWT verification (RS256/ES256), or claim logic richer than a few fields.

A few cross-pattern principles deserve to be carried forward into any KVS adoption:

- Always fail open in viewer-request KVS reads, except when the pattern is explicitly fail-secure (auth bypass lists, security gates). A missing key during a propagation window must not drop legitimate traffic.

- Never make KVS the sole authority for security decisions. KVS is eventually consistent and cached at edges; pair every edge-side check with origin-side enforcement for anything that affects authorization, billing, or anti-replay.

- Manage KVS state like database state. Source-control the desired keyset, apply diffs through ETag-checked pipelines, and stage every change against a non-production distribution before promotion.

- Watch the load-bearing limits. The 5 MB total store cap, the 1 KB value cap, and the 50-stores-per-account ceiling are the constraints that actually shape what you can put in KVS — design within them rather than around them.

- Quantify the savings before adopting. The Lambda@Edge → CloudFront Functions + KVS migration is roughly an order-of-magnitude per-million reduction at viewer-request volume, but the operational overhead of running a KVS pipeline is real — the second and third use cases pay back the investment, the first one rarely does on its own.

KVS is not a database, and the patterns in this article are explicitly designed around its constraints. Used within those constraints, it shifts a meaningful slice of edge logic from "expensive Lambda@Edge" to "cheap CloudFront Functions" without sacrificing the operational property that matters most: the ability to change behavior without redeploying code.

15. References

- Amazon CloudFront KeyValueStore Documentation

- Working With CloudFront Functions

- Lambda@Edge Documentation

- CloudFront Functions JavaScript Runtime 2.0 Reference

- CloudFront KeyValueStore API Reference

- Amazon CloudFront Pricing

- Add CloudFront WAF, Edge, ACM to a Custom Origin Like AWS Amplify Hosting

- AWS PrivateLink and VPC Endpoints Complete Guide

- AWS WAF for Generative AI and Prompt Injection Defense Patterns

References:

Tech Blog with curated related content

Written by Hidekazu Konishi